All of the REST API calls presented below use bearer tokens for authorization. The {prefix} of each API is configurable on the hosting servers. This protocol is inspired by Delta Sharing.
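
As a minimal sketch of the authorization scheme described above, the snippet below builds (but does not send) a request that carries a bearer token and joins a configurable prefix into the URL. The host name, the prefix value `/api/v1`, the `models` path, and the helper `build_request` are illustrative assumptions, not part of the specification.

```python
from urllib.request import Request


def build_request(base_url: str, prefix: str, path: str, token: str) -> Request:
    """Build, without sending, a GET request that carries a bearer token.

    The URL layout (base + prefix + path) is an assumption for illustration.
    """
    # Drop empty parts so an empty prefix does not produce a double slash.
    parts = [p.strip("/") for p in (base_url, prefix, path) if p.strip("/")]
    url = "/".join(parts)
    return Request(url, headers={"Authorization": f"Bearer {token}"})


# Hypothetical host, prefix, path, and token.
req = build_request("https://obaic.example.com", "/api/v1", "models", "my-secret-token")
print(req.full_url)
print(req.get_header("Authorization"))
```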

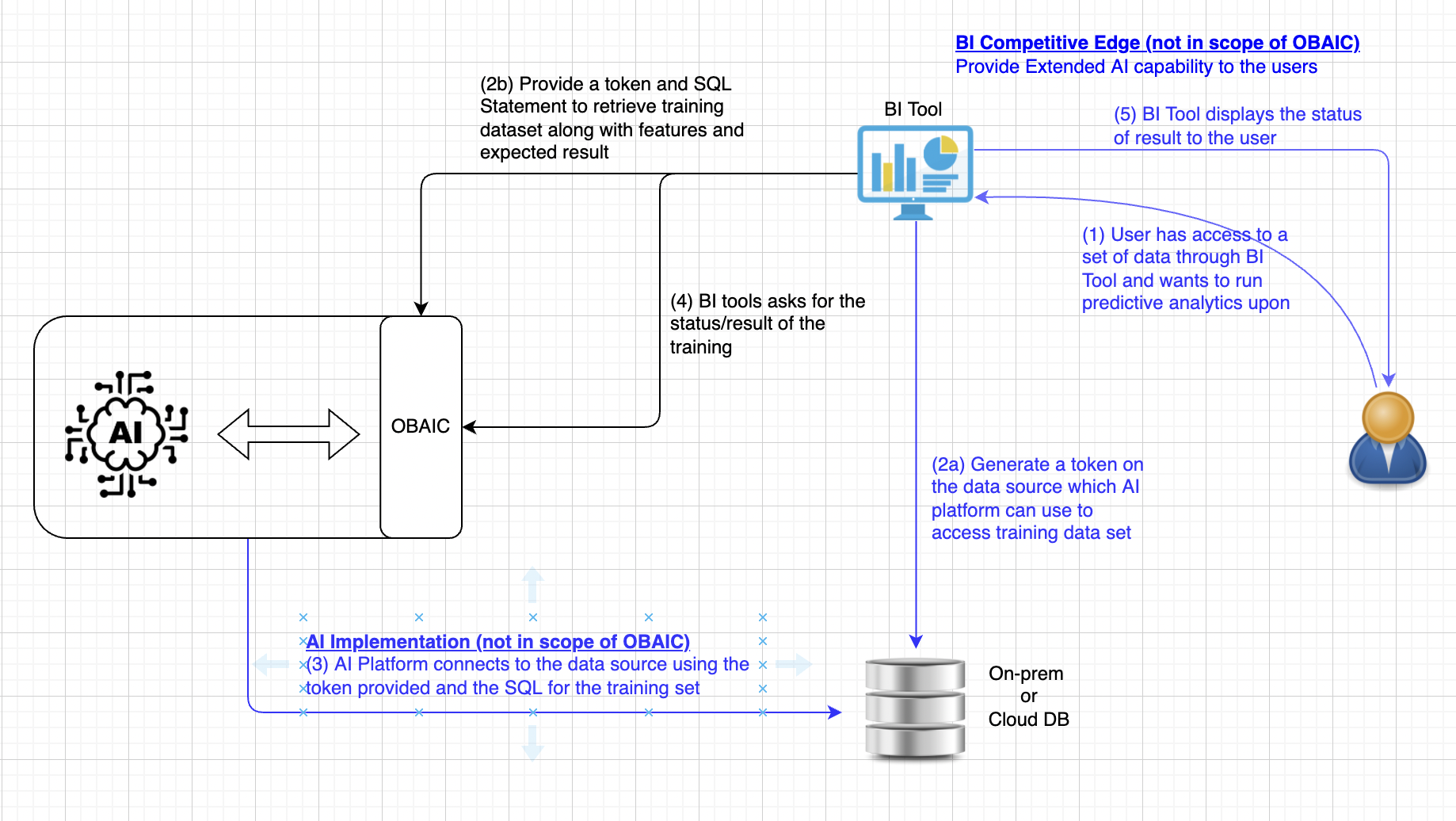

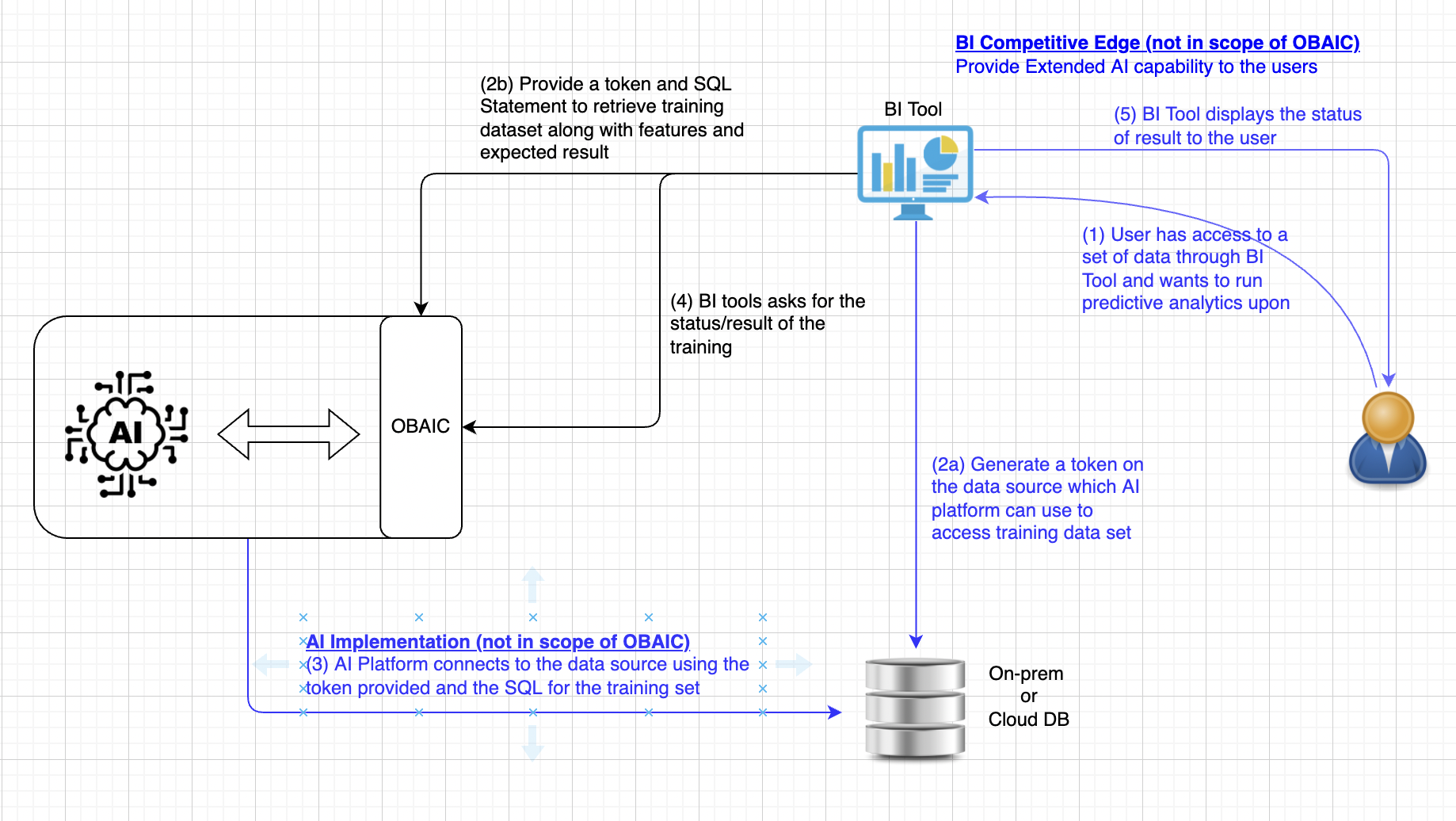

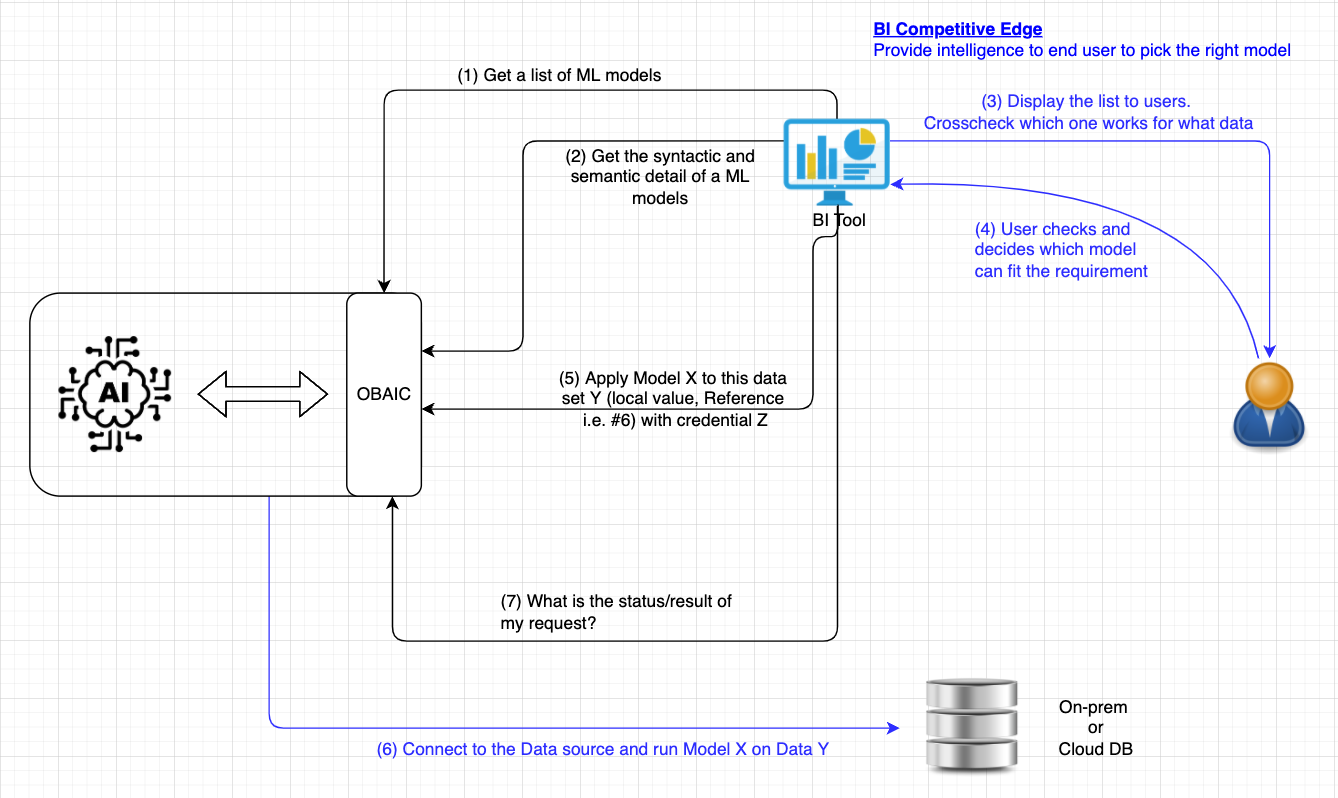

* Blue text below, whether in a diagram or in a description, marks functionality that is out of scope for OBAIC and is left to the BI tool, AI platform, or data source vendor to implement. OBAIC is the connective tissue that coordinates communication among these 3 major components and extends their capabilities.

(a) Obtain a token with the permissions associated with the user making the request. This token is passed to the AI platform, allowing it to access the training data by running a SQL statement against the datastore.

(b) The BI tool, on behalf of the user, requests the AI platform through OBAIC to train/prepare a model that accepts features of certain types (numeric, categorical, text, etc.).

Model configuration is based on configs from the open-source Ludwig project. At a minimum, we should be able to define inputs and outputs in a fairly standard way. Other model configuration parameters are subsumed by the options field. The data stanza provides a token (the dbToken) that allows the ML provider to access the data table(s) required for training. The provided SQL query indicates how the training data should be extracted from the source. Do not confuse the Bearer token, which is used to authenticate with OBAIC, with the dbToken, which is created in 2(a) and is used by the AI platform to access the data source for training.
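
To make the shape of such a training request concrete, here is a rough sketch of how a client might assemble the request body. The key names (`inputs`, `outputs`, `options`, `data`, `dbToken`, `query`), the feature type names, and the helper `build_train_request` are assumptions chosen to mirror the prose above; the actual schema may differ. Note that the Bearer token would travel in the HTTP `Authorization` header, while the dbToken sits inside the data stanza of the body.

```python
import json


def build_train_request(inputs, outputs, db_token, sql, options=None):
    """Assemble a hypothetical training request body.

    inputs/outputs are lists of (name, type) pairs; all key names are assumed.
    """
    if not inputs or not outputs:
        raise ValueError("at least one input and one output feature are required")
    return {
        "inputs": [{"name": n, "type": t} for n, t in inputs],
        "outputs": [{"name": n, "type": t} for n, t in outputs],
        # Any other model configuration parameters go here.
        "options": options or {},
        # dbToken is the data-source token from 2(a), not the OBAIC Bearer token.
        "data": {"dbToken": db_token, "query": sql},
    }


body = build_train_request(
    inputs=[("account_balance", "numeric"), ("region", "categorical")],
    outputs=[("churn", "binary")],
    db_token="token-from-step-2a",
    sql="SELECT account_balance, region, churn FROM accounts",
)
print(json.dumps(body, indent=2))
```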

Beyond the REST API, a SQL-like syntax is an alternative, since SQL is also well known. One approach is to take BigQuery ML model creation as an example and generalize it.
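
As an illustration of what a SQL-like form could look like, the sketch below renders a statement loosely modeled on BigQuery ML's `CREATE MODEL ... OPTIONS(...) AS SELECT ...` shape. This is not a syntax defined by OBAIC; the helper `render_create_model` and the model and table names are hypothetical, and the function does no escaping or validation of its arguments.

```python
def render_create_model(model_name, model_type, label_cols, query):
    """Render a BigQuery-ML-style CREATE MODEL statement (illustrative only)."""
    labels = ", ".join(f"'{col}'" for col in label_cols)
    return (
        f"CREATE MODEL {model_name}\n"
        f"OPTIONS(model_type='{model_type}', input_label_cols=[{labels}])\n"
        f"AS {query}"
    )


statement = render_create_model(
    model_name="churn_model",          # hypothetical model name
    model_type="logistic_reg",
    label_cols=["churn"],
    query="SELECT account_balance, region, churn FROM accounts",
)
print(statement)
```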

The BI tool polls for the status or retrieves the training result. While training is still in progress, the status is returned. Once training is completed, the results and performance of the model are returned.
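
The polling behaviour described above can be sketched as a small loop. The status value `"in_progress"`, the response keys, and the `fetch_status` callable are assumptions made for illustration; a real client would replace `fetch_status` with an HTTP call to the status endpoint.

```python
import time


def poll_training(fetch_status, interval_s=5.0, max_attempts=60, sleep=time.sleep):
    """Call fetch_status() until training is no longer in progress.

    Returns the first response whose status is not "in_progress" (assumed value).
    """
    for _ in range(max_attempts):
        response = fetch_status()
        if response.get("status") != "in_progress":
            return response
        sleep(interval_s)
    raise TimeoutError("training did not finish within the polling budget")


# Usage with a fake status source instead of a real HTTP call.
_responses = iter([
    {"status": "in_progress"},
    {"status": "in_progress"},
    {"status": "completed", "performance": {"metric": "accuracy", "value": 0.85}},
])
result = poll_training(lambda: next(_responses), interval_s=0, sleep=lambda s: None)
print(result["status"])
```

Injecting `sleep` keeps the loop testable without real delays; a production client would likely also add backoff and handle transport errors.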

1. BI tool asks for a list of available models

Example:

    {
      "models": [
        {
          "name": "Model 1",
          "id": "6d4b571a-80ca-41ef-bc67-b158f4352ad8"
        },
        {
          "name": "Model 2",
          "id": "70d9ab9d-9a64-49a8-be4d-d3a678b4ab16"
        },
        {
          "name": "Model 3",
          "id": "99914a97-5d2e-4b9f-b81a-1d43c9409162"
        },
        {
          "name": "Model 4",
          "id": "8295bfda-7901-43e8-9d31-81fd1c3210ee"
        },
        {
          "name": "Model 5",
          "id": "0693c224-3a3f-4fe7-bbbe-c70f93d15f12"
        }
      ],
      "nextPageToken": "3xXc4ZAsqZQwgejt"
    }
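
The `nextPageToken` in the example response suggests token-based pagination. A minimal sketch of a client-side iterator follows, assuming that a missing or empty `nextPageToken` marks the last page and that the token from one response is passed back on the next request. The `fetch_page` callable and the shortened second page are illustrative stand-ins for real HTTP calls.

```python
def iter_models(fetch_page):
    """Yield every model across pages.

    fetch_page(token) returns one page as a dict; token is None for the first page.
    """
    token = None
    while True:
        page = fetch_page(token)
        yield from page.get("models", [])
        token = page.get("nextPageToken")
        if not token:  # assumed end-of-list signal
            break


# Fake two-page listing keyed by the page token used to request it.
_pages = {
    None: {
        "models": [
            {"name": "Model 1", "id": "6d4b571a-80ca-41ef-bc67-b158f4352ad8"},
            {"name": "Model 2", "id": "70d9ab9d-9a64-49a8-be4d-d3a678b4ab16"},
        ],
        "nextPageToken": "3xXc4ZAsqZQwgejt",
    },
    "3xXc4ZAsqZQwgejt": {
        "models": [{"name": "Model 3", "id": "99914a97-5d2e-4b9f-b81a-1d43c9409162"}],
    },
}
names = [m["name"] for m in iter_models(_pages.get)]
print(names)
```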

2. Get Model details

Example:

    {
      "id": "6d4b571a-80ca-41ef-bc67-b158f4352ad8",
      "name": "Model 1",
      "revision": 3,
      "format": {
        "name": "PMML",
        "version": "4.3"
      },
      "algorithm": "Neural Network",
      "tags": [
        "Anomaly detection",
        "Banking"
      ],
      "dependency": "",
      "creator": "John Doe",
      "description": "This is a predictive model. Refer to {input} and {output} for the detailed format of each field, such as the value range of a field, as well as the possible predictions the model will give. You may also refer to the example data here.",
      "input": {
        "fields": [
          {
            "name": "Account ID",
            "opType": "categorical",
            "dataType": "string",
            "taxonomy": "ID",
            "example": "account abc-001",
            "allowMissing": false,
            "description": "unique value"
          },
          {
            "name": "Account Balance",
            "opType": "continuous",
            "dataType": "double",
            "taxonomy": "currency",
            "example": "1,378,560.00",
            "allowMissing": true,
            "description": "Minimum: 0, Maximum: 999,999,999.00"
          }
        ],
        "ref": "http://dmg.org/pmml/v4-3/pmml-4-3.xsd"
      },
      "output": {
        "fields": [
          {
            "name": "Churn",
            "opType": "continuous",
            "dataType": "string",
            "taxonomy": "ID",
            "example": "0.67",
            "allowMissing": false,
            "description": "the probability that the account stops doing business with a company over 6 months"
          }
        ],
        "ref": "http://dmg.org/pmml/v4-3/pmml-4-3.xsd"
      },
      "performance": {
        "metric": "accuracy",
        "value": 0.85
      },
      "rating": 5,
      "url": "uri://link_to_the_model"
    }
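
One way a BI tool might use the `input.fields` metadata is to check a record before sending it for prediction. The sketch below is an assumption about how `allowMissing` and `opType` could be interpreted (a missing value is an absent key or `None`; `continuous` means a numeric value); the specification does not define this validation, and `validate_record` is a hypothetical helper.

```python
def validate_record(record, fields):
    """Return a list of problems found in record, given model input field specs."""
    errors = []
    for field in fields:
        name = field["name"]
        value = record.get(name)
        if value is None:
            if not field.get("allowMissing", False):
                errors.append(f"missing required field: {name}")
            continue
        # bool is a subclass of int in Python, so reject it explicitly.
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        if field.get("opType") == "continuous" and not is_number:
            errors.append(f"field {name} must be numeric")
    return errors


# Field specs trimmed from the example response above.
fields = [
    {"name": "Account ID", "opType": "categorical", "allowMissing": False},
    {"name": "Account Balance", "opType": "continuous", "allowMissing": True},
]
ok = validate_record({"Account ID": "account abc-001"}, fields)
bad = validate_record({"Account Balance": "a lot"}, fields)
print(ok)
print(bad)
```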

errorCode and message for each API call

| Name | Affiliation |
|---|---|
| Cupid Chan | Pistevo Decision |
| Xiangxiang Meng | Redfin |
| Deepak Karuppiah | MicroStrategy |
| Nancy Rausch | SAS |
| Dalton Ruer | Qlik |
| Sachin Sinha | Microsoft |
| Yi Shao | IBM |
| Jeffrey Tang | Predibase |
| Lingyan Yin | Salesforce |

Get core evaluation metrics for a trained model.

function GetModelMetrics(UUID) -> Metrics

Example response:

{ |

function PredictWithModel(UUID, dataConfig) -> Predictions

Example params

{ |

The data stanza is very similar to the one in the train request; it designates the feature data on which to predict.

Example response (as JSON here for convenience, not necessarily for large responses):

{ |

Note that directly returning a large response set is not a good idea. In practice, the results could be streamed through something like a persistent socket connection.
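
As a rough sketch of the streaming idea, the generator below splits predictions into small batches and emits each batch as one newline-terminated JSON line, so the receiver can process results incrementally instead of waiting for one large body. The transport itself (socket, chunked HTTP, and so on) is left out, and the function name, batch size, and record shape are assumptions for illustration.

```python
import json
from typing import Iterable, Iterator


def stream_predictions(predictions: Iterable[dict], batch_size: int = 2) -> Iterator[str]:
    """Yield predictions as newline-delimited JSON batches."""
    batch = []
    for prediction in predictions:
        batch.append(prediction)
        if len(batch) == batch_size:
            yield json.dumps(batch) + "\n"
            batch = []
    if batch:  # flush the final partial batch
        yield json.dumps(batch) + "\n"


# Hypothetical prediction records.
preds = [{"id": i, "churn": round(0.1 * i, 1)} for i in range(5)]
chunks = list(stream_predictions(preds, batch_size=2))
print(len(chunks))
```

Each yielded string could be written to an open connection as soon as it is produced, which keeps memory use bounded by the batch size on the sending side.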