Overview

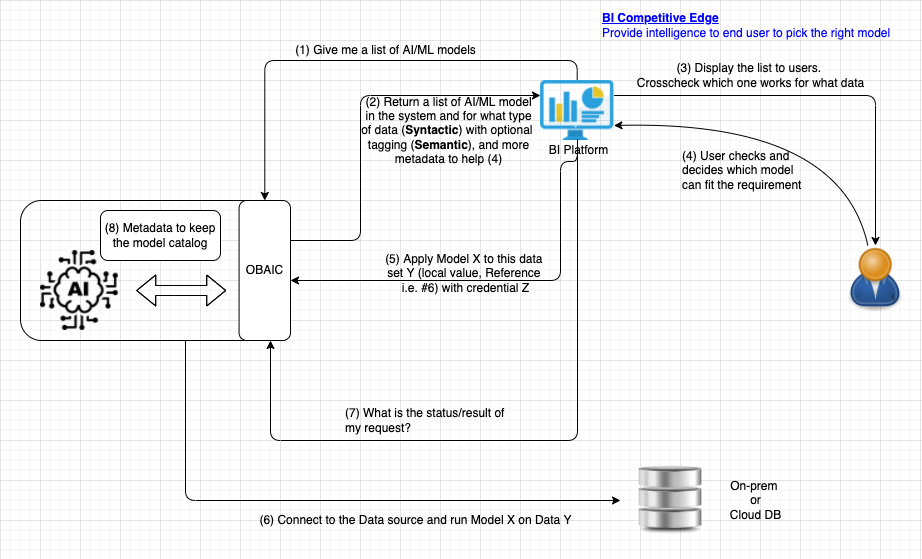

- Open Business and Artificial Intelligence Connectivity (OBAIC) borrows its concept from Open Database Connectivity (ODBC), an interface that lets applications access data from a variety of database management systems (DBMSs). OBAIC aims to be the equivalent interface that lets BI tools access machine learning models from a variety of AI platforms: "AI ODBC for BI".

- Through OBAIC, BI vendors can connect to any AI platform without concern for the underlying implementation or for how the AI platform trains the model or infers results. This mirrors ODBC, where it is up to the database how it stores the data and executes the query.

- The committee has decided that this standard will only define the REST API protocol for how AI and BI communicate, with calls initiated from BI to AI. The design and actual implementation of OBAIC (for example, whether it runs as a server, serverless, or in Docker) is left to the vendor, or to a separate open-source project if this protocol grows to include one.

- There are 3 key aspects when designing this standard:

- BI - what specific calls do I need this standard to provide so that I can better leverage any underlying AI/ML framework?

- AI - what is the common denominator that an AI framework should provide to support this standard?

- Data - Should data be moved around in the communication between AI and BI (pass by value) or kept in the same location (pass by reference)?
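To make the pass-by-value versus pass-by-reference question concrete, the sketch below builds two hypothetical prediction request bodies. Every field name here (`mode`, `rows`, `source`, `query`, `accessToken`) is an assumption made for illustration; none of them is defined by this standard.

```python
import json

def by_value_request(rows):
    """Pass by value: the feature rows travel inside the request body."""
    return {"data": {"mode": "value", "rows": rows}}

def by_reference_request(source, query, access_token):
    """Pass by reference: only a pointer to the data travels; the AI side
    would fetch the rows itself using the supplied token."""
    return {
        "data": {
            "mode": "reference",
            "source": source,            # hypothetical datastore identifier
            "query": query,              # how to select the rows
            "accessToken": access_token  # hypothetical credential field
        }
    }

rows = [{"age": 42, "plan": "pro"}, {"age": 31, "plan": "free"}]
value_body = json.dumps(by_value_request(rows))
reference_body = json.dumps(
    by_reference_request("warehouse.example", "SELECT age, plan FROM customers", "<token>")
)

# The by-value body grows with the number of rows; the by-reference body does not.
print(len(value_body), len(reference_body))
```

The trade-off this illustrates: pass by value keeps the AI side free of any datastore access, while pass by reference keeps request size constant but requires the AI side to hold a credential.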

Scope

- Machine learning has 2 key steps: model training and result inference. This first release of the protocol focuses only on inference. Training is included here but is subject to further discussion.

Overall Flow

REST APIs

All of the REST API calls presented below use bearer tokens for authorization. The {prefix} of each API is configurable on the hosting server. This protocol is inspired by Delta Sharing.
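As a minimal sketch of what a bearer-token call under a configurable {prefix} might look like, the snippet below builds (but does not send) a request with Python's standard library. The host, the prefix value, the `models` path, and the use of GET are all assumptions for illustration, not values fixed by this protocol.

```python
import urllib.request

def build_request(host, prefix, path, token):
    """Build (but do not send) an authorized GET request under a prefix."""
    url = f"{host.rstrip('/')}/{prefix.strip('/')}/{path.lstrip('/')}"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {token}"},
        method="GET",
    )

# Hypothetical host, prefix, and path; "<token>" stands in for a real bearer token.
req = build_request("https://ai.example.com", "/obaic/v1/", "models", "<token>")
print(req.full_url)
print(req.get_header("Authorization"))
```

Normalizing the slashes in one place means the server operator can configure the prefix with or without leading and trailing slashes.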

List Models - Step (1)

| HTTP Request | Value |
|---|---|
| Method | |
| Header | |
| URL | |
| Query Parameters | maxResults (type: Int32, optional): The maximum number of results per page that should be returned. If the number of available results is larger than … pageToken (type: String, optional): Specifies a page token to use. Set … |
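The interplay of `maxResults` and `pageToken` can be sketched as a client-side loop. This assumes Delta Sharing style semantics, where a response carries a `nextPageToken` whenever more results remain. The `items` field name, the in-memory catalog, and the offset-based token are assumptions; the real token format is still listed as open under Future Enhancement.

```python
MODELS = [f"model-{i}" for i in range(5)]  # fake server-side catalog

def list_models_page(max_results=None, page_token=None):
    """Stand-in for one List Models call.
    The token here is just a stringified offset (an assumption)."""
    start = int(page_token) if page_token else 0
    end = len(MODELS) if max_results is None else start + max_results
    page = {"items": MODELS[start:end]}
    if end < len(MODELS):
        # More results remain, so hand back a token for the next page.
        page["nextPageToken"] = str(end)
    return page

def list_all_models(max_results):
    """Follow nextPageToken until the server stops returning one."""
    items, token = [], None
    while True:
        page = list_models_page(max_results=max_results, page_token=token)
        items.extend(page["items"])
        token = page.get("nextPageToken")
        if token is None:
            return items

all_models = list_all_models(max_results=2)
print(all_models)
```

Clients should treat the token as opaque: the loop above only ever passes back what the previous response returned.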

Example:

Error - Applies to all API calls above

Authors

Future Enhancement

- Define data pipeline to transform data before running

- Containerized Model so that prediction can run in BI instead of in AI

- nextPageToken format

References

- Tableau version of OBAIC: https://tableau.github.io/analytics-extensions-api/docs/ae_example_tabpy.html

- Qlik version of OBAIC: https://github.com/qlik-oss/server-side-extension

- Delta Sharing: https://github.com/delta-io/delta-sharing/blob/main/PROTOCOL.md#delta-sharing-protocol

Decision to be made

- Data file type: which data types do we support? For example, Delta requires Parquet; what about RDBMS? Jeffrey's initial cut below can be modified to support multiple data types, depending on the use case.

- Inference: pass by value should be good enough if it is only for prediction

- Train: not immediate, maybe later in Phase 2

- Metadata structure: what kind of JSON schema do we need?

- Do we only support a specific model format (ONNX) or an arbitrary number of frameworks?

- Decouple model (asking the model to predict and train) and data (listing, upload, download)

- Finalize Logo

FAQ

Why should we share our model with you?

Ownership? Model and Data?

Security?

How can the data be accessed mechanically, for training?

This is a short doc illustrating a sample skeleton OBAIC protocol. This proposal envisions a data-centric workflow:

- BI vendor has some data on which predictive analytics would be valuable.

- BI vendor requests AI vendor (through OBAIC) to train/prepare a model that accepts features of a certain type (numeric, categorical, text, etc.)

- BI vendor gives AI vendor a token to allow access to the training data with the above features. A SQL statement is a natural way to specify how to retrieve data from the datastore.

- Once the model is trained, BI vendor can see the results of training (e.g., accuracy).

- AI vendor provides predictions on data shared by BI vendor, again using an access token.
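The workflow above can be sketched end to end with an in-memory stand-in for the AI vendor. Nothing is actually trained here; the method names mirror the API functions in this proposal, while the status value `READY`, the `token` and `query` keys, and the shapes of the returned dictionaries are assumptions for illustration.

```python
import uuid

class MockAIProvider:
    """In-memory stand-in for an AI vendor; nothing is really trained."""

    def __init__(self):
        self._models = {}

    def train_model(self, inputs, outputs, model_options, data_config):
        model_id = str(uuid.uuid4())
        # A real provider would use data_config (token + SQL) to pull training data.
        self._models[model_id] = {"status": "READY", "outputs": outputs}
        return model_id

    def get_model_status(self, model_id):
        return self._models[model_id]["status"]

    def get_model_metrics(self, model_id):
        return {"accuracy": None}  # placeholder; no real evaluation happens

    def predict_with_model(self, model_id, data_config):
        # A real provider would fetch rows via data_config; the mock returns none.
        return {"predictions": []}

provider = MockAIProvider()
model_id = provider.train_model(
    inputs=[{"name": "age", "type": "numeric"}],
    outputs=[{"name": "churned", "type": "categorical"}],
    model_options={},
    data_config={"token": "<training-data-token>",
                 "query": "SELECT age, churned FROM customers"},
)
status = provider.get_model_status(model_id)
metrics = provider.get_model_metrics(model_id)
result = provider.predict_with_model(
    model_id,
    {"token": "<prediction-data-token>", "query": "SELECT age FROM new_customers"},
)
print(status, metrics, result)
```

Note that the BI side only ever handles a model identifier, tokens, and queries; the data itself stays wherever the tokens point.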

API

Train a New Model

function TrainModel(inputs, outputs, modelOptions, dataConfig) -> UUID

Example params:

{ "providerSpecificOption": "value" },

Model configuration is based on configs from the open-source Ludwig project. At a minimum, we should be able to define inputs and outputs in a fairly standard way. Other model configuration parameters are subsumed by the options field.

The data stanza provides a bearer token allowing the ML provider to access the required data table(s) for training. The provided SQL query indicates how the training data should be extracted from the source.
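As a rough sketch of how the four TrainModel arguments might be assembled into a request body, the helper below checks that every feature declares a name and a type and attaches a data stanza with a token and a SQL query. Only `providerSpecificOption` comes from the example above; the keys `bearerToken` and `query`, and the type strings `numeric` and `categorical`, are assumptions rather than part of any agreed schema.

```python
import json

def build_train_request(inputs, outputs, model_options, token, sql):
    """Assemble the four TrainModel arguments into one JSON-ready dict."""
    for feature in list(inputs) + list(outputs):
        if "name" not in feature or "type" not in feature:
            raise ValueError(f"feature needs 'name' and 'type': {feature!r}")
    return {
        "inputs": inputs,
        "outputs": outputs,
        "modelOptions": model_options,
        # Hypothetical data stanza: a bearer token plus the extraction query.
        "dataConfig": {"bearerToken": token, "query": sql},
    }

train_request = build_train_request(
    inputs=[{"name": "age", "type": "numeric"},
            {"name": "plan", "type": "categorical"}],
    outputs=[{"name": "churned", "type": "categorical"}],
    model_options={"providerSpecificOption": "value"},
    token="<token>",
    sql="SELECT age, plan, churned FROM customers",
)
print(json.dumps(train_request, indent=2))
```

Validating features on the BI side catches malformed requests before any token is sent to the provider.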

Example response:

{ … }

Consider also a fully SQL-like interface taking BigQuery ML model creation as an example and generalizing:

CREATE MODEL ( … ) AS (SELECT foo FROM BAR)

List Models

function ListModels() -> List[UUID, Status]

Example response:

{ … }

Show Model Config

function GetModelConfig(UUID) -> Config

Example response:

{ … }

The response here is essentially a pared-down version of the original training configuration.
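Which fields get pared away is not specified here. One plausible reading, sketched below, is that the data stanza (which carries a credential) is dropped while the model definition is kept. The `dataConfig` and `bearerToken` key names and the choice of what to strip are assumptions.

```python
import copy

def pare_down_config(train_config, dropped_keys=("dataConfig",)):
    """Return a copy of the training config without the listed top-level keys."""
    pared = copy.deepcopy(train_config)  # never mutate the stored original
    for key in dropped_keys:
        pared.pop(key, None)
    return pared

original_config = {
    "inputs": [{"name": "age", "type": "numeric"}],
    "outputs": [{"name": "churned", "type": "categorical"}],
    "modelOptions": {"providerSpecificOption": "value"},
    "dataConfig": {"bearerToken": "<token>", "query": "SELECT age, churned FROM customers"},
}
shown_config = pare_down_config(original_config)
print(sorted(shown_config))
```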

Get Model Status

function GetModelStatus(UUID) -> Status

Example response:

{ … }
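Because training is asynchronous, a BI client would likely poll GetModelStatus until the model reaches a final state. The sketch below shows one way to do that; the status strings (`PENDING`, `TRAINING`, `READY`, `FAILED`) are hypothetical, and the status function is faked with a fixed sequence so the example runs without a server.

```python
import time

TERMINAL_STATUSES = {"READY", "FAILED"}  # hypothetical status values

def wait_for_model(get_status, model_id, interval=0.0, max_polls=10, sleep=time.sleep):
    """Poll get_status(model_id) until a terminal status or max_polls is reached."""
    for _ in range(max_polls):
        status = get_status(model_id)
        if status in TERMINAL_STATUSES:
            return status
        sleep(interval)
    raise TimeoutError(f"model {model_id} not finished after {max_polls} polls")

# Fake status source standing in for repeated GetModelStatus calls.
_fake_statuses = iter(["PENDING", "TRAINING", "READY"])
final_status = wait_for_model(lambda _model_id: next(_fake_statuses), "model-123")
print(final_status)
```

Capping the number of polls keeps a stuck training job from blocking the BI tool indefinitely.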

Get Model Metrics

Get core evaluation metrics for a trained model.

function GetModelMetrics(UUID) -> Metrics

Example response:

{ … }

Predict Using Trained Model

function PredictWithModel(UUID, dataConfig) -> Predictions

Example params:

{ … }

The data stanza is very similar to the one in the train request; it designates the feature data on which to predict.

Example response (as JSON here for convenience, not necessarily for large responses):

{ … }

Note that directly returning a large response set is not a good idea. In practice, the results could be streamed through something like a persistent socket connection.
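One way to avoid a single large response, sketched here, is to emit predictions in small newline-delimited JSON chunks that the client consumes incrementally. This swaps in plain Python generators for the persistent socket mentioned above, purely to show the chunking idea; the prediction fields (`id`, `label`) and the batch size are assumptions.

```python
import json
from itertools import islice

def stream_predictions(predictions, batch_size):
    """Yield newline-delimited JSON chunks, batch_size predictions at a time."""
    iterator = iter(predictions)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield "".join(json.dumps(p) + "\n" for p in batch)

def read_stream(chunks):
    """Client side: decode each line as soon as its chunk arrives."""
    for chunk in chunks:
        for line in chunk.splitlines():
            yield json.loads(line)

preds = [{"id": i, "label": "churned" if i % 2 else "retained"} for i in range(5)]
chunks = list(stream_predictions(preds, batch_size=2))
received = list(read_stream(chunks))
print(len(chunks), len(received))
```

Neither side ever holds more than one batch in memory, which is the property a socket-based transport would also aim to preserve.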