...

All of the REST API calls presented below use bearer tokens for authorization. The {prefix} of each API is configurable on the hosting servers. This protocol is inspired by Delta Sharing.

...

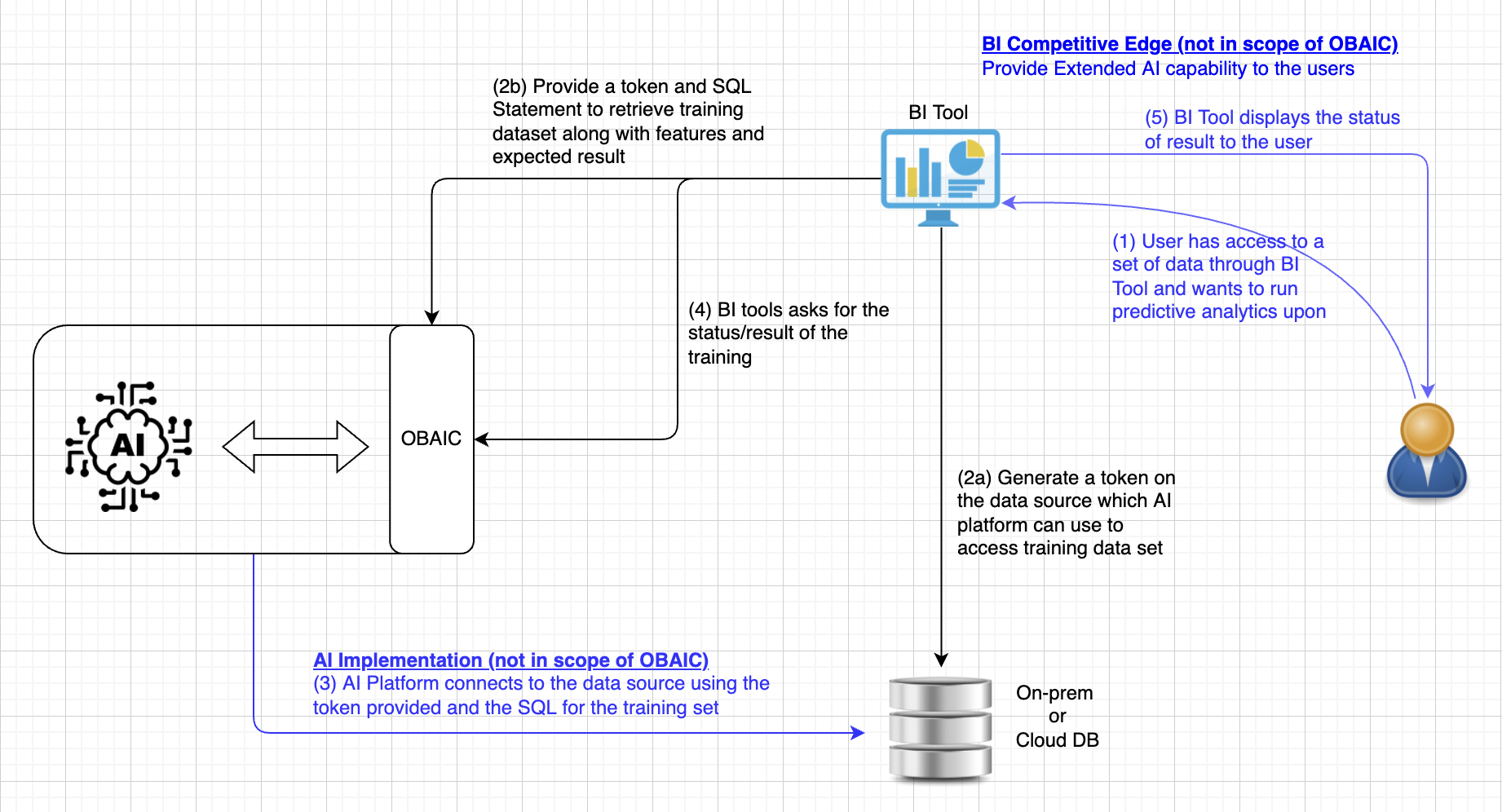

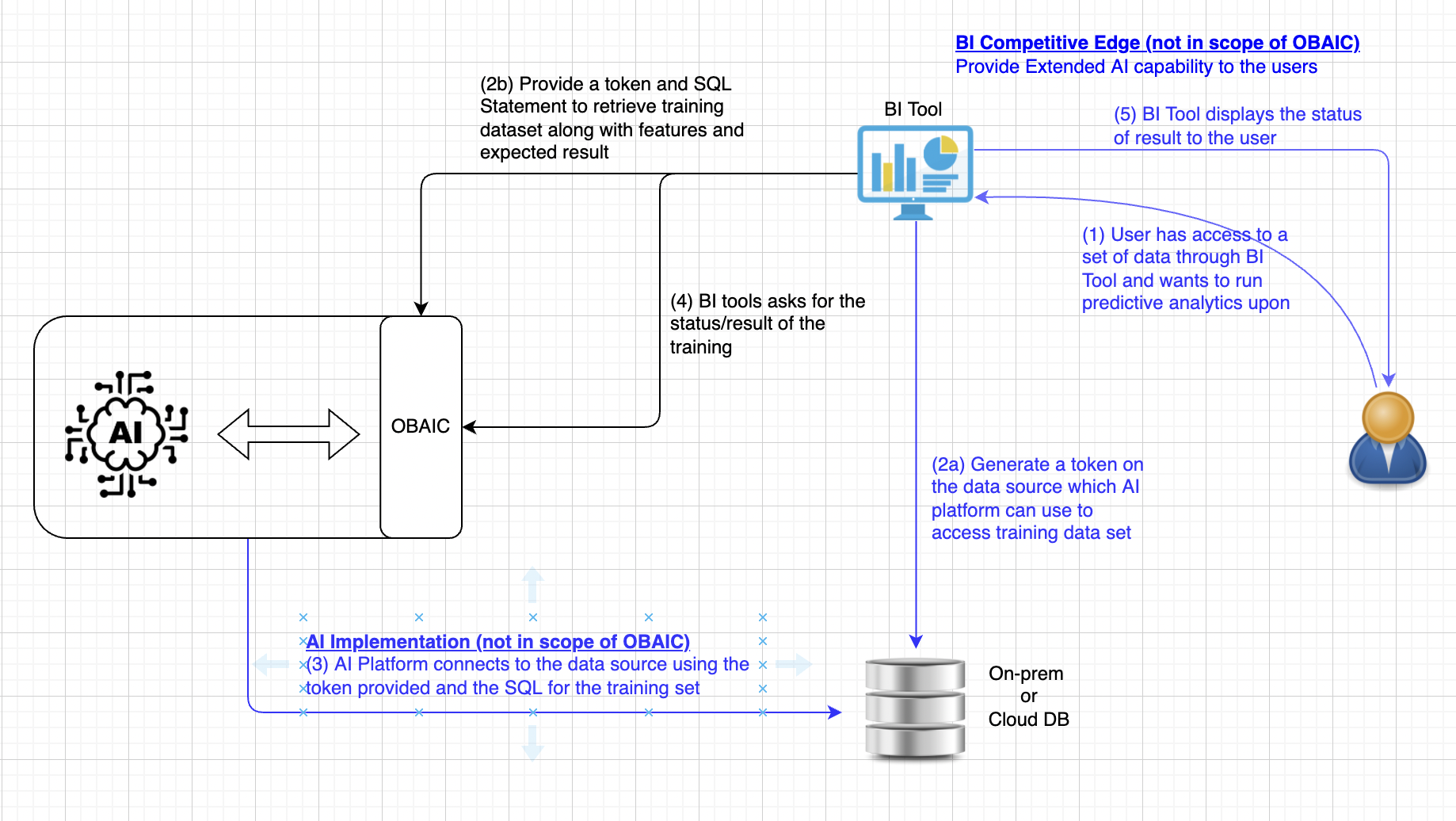

Protocol - Training

* Blue text below, whether in the diagram or in the description, marks steps that are outside the scope of OBAIC; it is up to the BI tool, AI platform, or data source vendor to implement them. OBAIC is the connective tissue that coordinates communication among these three major components and extends their capabilities.

- Not in the scope of OBAIC - The user analyzes data using BI tools and finds that predictive analytics on those data sets would be valuable. This is the traditional step in which a user interacts with BI.

(a) Obtain a token with the permissions associated with the user making the request. This token is passed to the AI platform, allowing it to access the training data by running a SQL statement against the datastore. (b) The BI tool, on behalf of the user, requests the AI platform, through OBAIC, to train/prepare a model that accepts features of certain types (numeric, categorical, text, etc.).

API to train a model using the provided dataset

Model configuration is based on configs from the open-source Ludwig project. At a minimum, we should be able to define inputs and outputs in a fairly standard way. Other model configuration parameters are subsumed by the options field.

The data stanza provides a bearer token allowing the ML provider to access the required data table(s) for training. The provided SQL query indicates how the training data should be extracted from the source.

Do not confuse the Bearer token, which is used to authenticate with OBAIC, with the dbToken, which is created in 2(a) and which the AI platform uses to access the data source for training.
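To make the distinction concrete, here is a minimal Python sketch that assembles the training request described in this step. The OBAIC bearer token goes into the Authorization header, while the dbToken travels inside the payload. The function name `build_training_request`, the host `obaic.example.com`, and the choice to send the payload as a JSON body are assumptions for illustration; the field names mirror the example request in this section.

```python
import json
from urllib.request import Request


def build_training_request(prefix, obaic_token, db_token, inputs, outputs,
                           data, model_options=None):
    """Assemble (but do not send) the POST {prefix}/models/ request.

    Assumption: the payload is sent as a JSON request body.
    """
    payload = {
        "dbToken": db_token,  # used by the AI platform against the data source
        "inputs": inputs,
        "outputs": outputs,
        "modelOptions": model_options or {},
        "data": data,
    }
    return Request(
        url=f"{prefix.rstrip('/')}/models/",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Authenticates the caller with OBAIC; never placed in the payload.
            "Authorization": f"Bearer {obaic_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_training_request(
    prefix="https://obaic.example.com/api",  # hypothetical host and prefix
    obaic_token="OBAIC_TOKEN",               # placeholder value
    db_token="D41C4A382C27A4B5DF824E2D4F148",
    inputs=[
        {"name": "customerAge", "type": "numeric"},
        {"name": "activeInLastMonth", "type": "binary"},
    ],
    outputs=[{"name": "canceledMembership", "type": "binary"}],
    data={
        "sourceType": "snowflake",
        "endpoint": "some/endpoint",
        "bearerToken": "...",
        "query": "SELECT foo FROM bar WHERE baz",
    },
    model_options={"providerSpecificOption": "value"},
)
print(request.get_method(), request.full_url)
```

The request object is only built here, not sent, so the sketch can be inspected without a running OBAIC server.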

| HTTP Request | Value |
|---|---|
| Method | POST |
| Header | Authorization: Bearer {token} |
| URL | {prefix}/models/ |
| Query Parameters | JSON document shown below |

    {
      "dbToken": "D41C4A382C27A4B5DF824E2D4F148",
      "inputs": [
        {
          "name": "customerAge",
          "type": "numeric"
        },
        {
          "name": "activeInLastMonth",
          "type": "binary"
        }
      ],
      "outputs": [
        {
          "name": "canceledMembership",
          "type": "binary"
        }
      ],
      "modelOptions": {"providerSpecificOption": "value"},
      "data": {
        "sourceType": "snowflake",
        "endpoint": "some/endpoint",
        "bearerToken": "...",
        "query": "SELECT foo FROM bar WHERE baz"
      }
    }

Alternatively, we may also consider supporting a SQL-like syntax for model training

If we go beyond the REST API, a SQL-like syntax is an alternative, since it is also well known.

Using BigQuery ML model creation as an example and generalizing:

    CREATE MODEL (
      customerAge WITH ENCODING (
        type=numeric
      ),
      activeInLastMonth WITH ENCODING (
        type=binary
      ),
      canceledMembership WITH DECODING (
        type=binary
      )
    )
    FROM myData (
      sourceType=snowflake,
      endpoint="some/endpoint",
      bearerToken=<...>
    ) AS (SELECT foo FROM BAR)
    WITH OPTIONS ();

200: Training is started and the corresponding ID is returned for future reference

| HTTP Response | Value |
|---|---|
| Header | Content-Type: application/json; charset=utf-8 |
| Body | {"modelID": "d677b054-2cd4-4711-959b-971af0081a73"} |

modelID is generated and returned to the caller if training is started successfully. It is used to check the status of the training, or for future inference (see the Inference section below).
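A caller might handle this response along the following lines. This is a sketch only: the helper name `extract_model_id` is hypothetical, and treating any status other than 200 as an error is an assumption, since only the 200 response is described here.

```python
import json


def extract_model_id(status_code: int, body: str) -> str:
    """Return the modelID from a training response, or raise ValueError."""
    if status_code != 200:
        # Assumption: anything other than 200 means training did not start.
        raise ValueError(f"training not started (HTTP {status_code})")
    model_id = json.loads(body).get("modelID")
    if not model_id:
        raise ValueError("response has no modelID")
    return model_id


# Body taken from the example 200 response.
model_id = extract_model_id(
    200, '{"modelID": "d677b054-2cd4-4711-959b-971af0081a73"}'
)
print(model_id)
```

The returned value is what the caller keeps for later status checks and inference calls.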

- Not in the scope of OBAIC - The AI platform provides the implementation that fulfills the request by connecting to the data source with the provided token and retrieving the set of training data specified in SQL. How the AI platform interacts with the data source to perform the training is up to the platform.

The BI tool polls for the status or retrieves the training result. If training is still in progress, the status is returned. When training is completed, the results and performance of the model are returned.

API to get model status

| HTTP Request | Value |
|---|---|
| Method | GET |
| Header | Authorization: Bearer {token} |
| URL | {prefix}/modelStatus?modelID= |
| Query Parameters | modelID (type: String): The modelID returned from a previous OBAIC call, either from training or from the list of models. |

200: Status of the model returned

| HTTP Response | Value |
|---|---|
| Header | Content-Type: application/json; charset=utf-8 |
| Body | {"modelID": "d677b054-2cd4-4711-959b-971af0081a73", "status": "training", "progress": "80"} |

* modelID is the same ID provided in the request
* status can be training | inferencing | ready
* progress is the estimated progress of the current status
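The polling loop a BI tool might run against this endpoint can be sketched as follows. The function names, the polling interval, and the attempt limit are assumptions; `fetch_status` stands in for whatever HTTP client the tool uses to call GET {prefix}/modelStatus, so the example runs without a server, and the canned responses are illustrative values only.

```python
import time
from urllib.parse import urlencode


def model_status_url(prefix, model_id):
    """Build the GET URL for the model status call."""
    return f"{prefix.rstrip('/')}/modelStatus?{urlencode({'modelID': model_id})}"


def poll_until_ready(fetch_status, model_id, interval_s=5.0, max_attempts=60,
                     sleep=time.sleep):
    """Call fetch_status(model_id) until the reported status is "ready".

    fetch_status must return the decoded JSON body as a dict.
    Raises TimeoutError if the model is not ready after max_attempts polls.
    """
    for _ in range(max_attempts):
        status = fetch_status(model_id)
        if status["status"] == "ready":
            return status
        sleep(interval_s)  # wait before the next poll
    raise TimeoutError(f"model {model_id} not ready after {max_attempts} polls")


# Usage with a stubbed fetcher that replays canned (made-up) responses.
MODEL_ID = "d677b054-2cd4-4711-959b-971af0081a73"
canned = iter([
    {"modelID": MODEL_ID, "status": "training", "progress": "80"},
    {"modelID": MODEL_ID, "status": "ready", "progress": "100"},
])
final = poll_until_ready(lambda _id: next(canned), MODEL_ID,
                         sleep=lambda _s: None)  # skip real waiting in the demo
print(final["status"])
```

Injecting `fetch_status` and `sleep` keeps the loop independent of any particular HTTP library and makes it easy to exercise without network access.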

- Not in the scope of OBAIC - The BI tool presents the result to the user in its own way, which is the "secret sauce" unique to each tool.

...

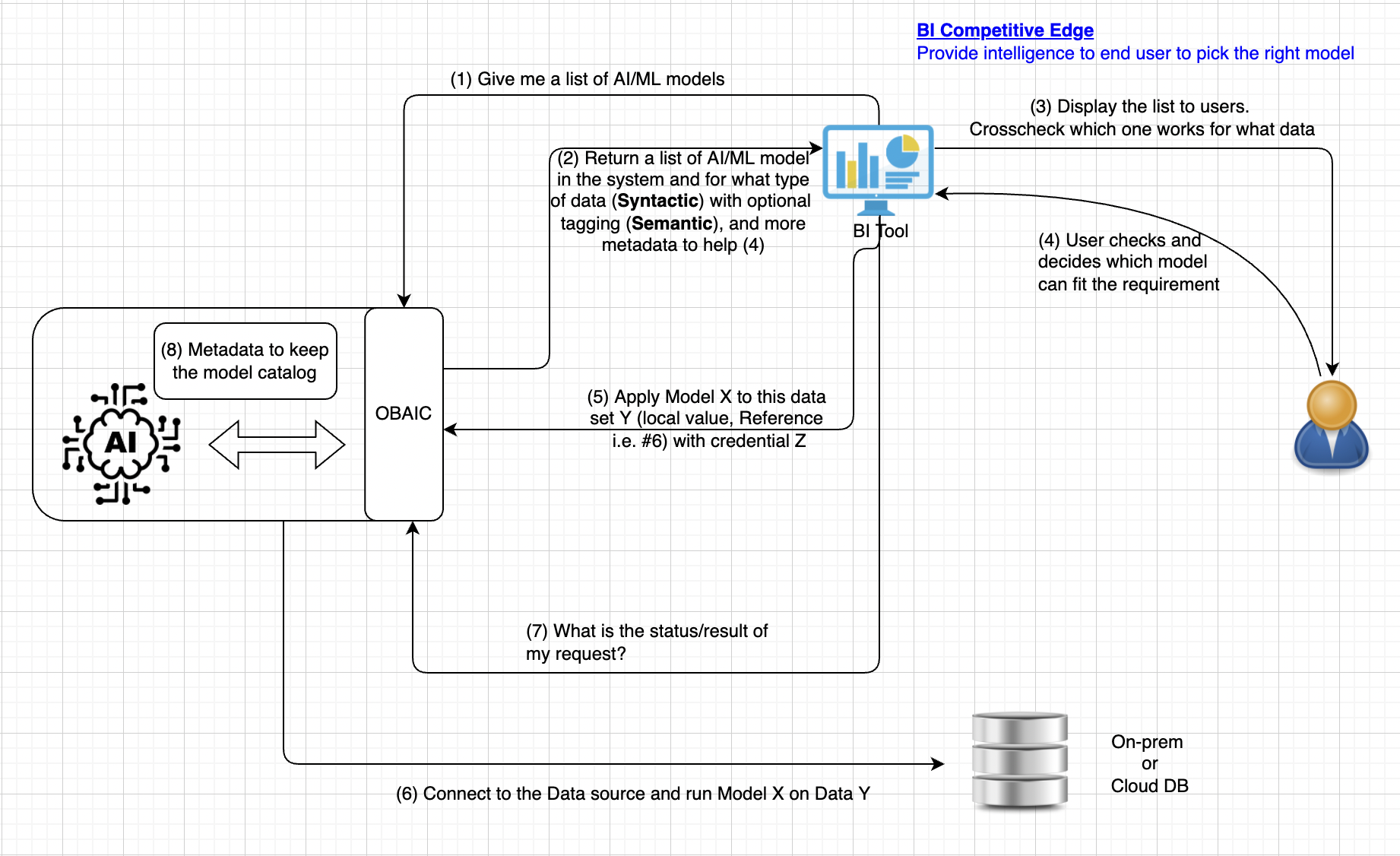

Protocol - Inference

List Models - Step (1)


...