History

...

- Started. 2020-04-14

...

About

- Drafted. 2020-04-27

TAG LINE

NNStreamer: Efficient and flexible stream pipeline framework for complex neural network applications

About

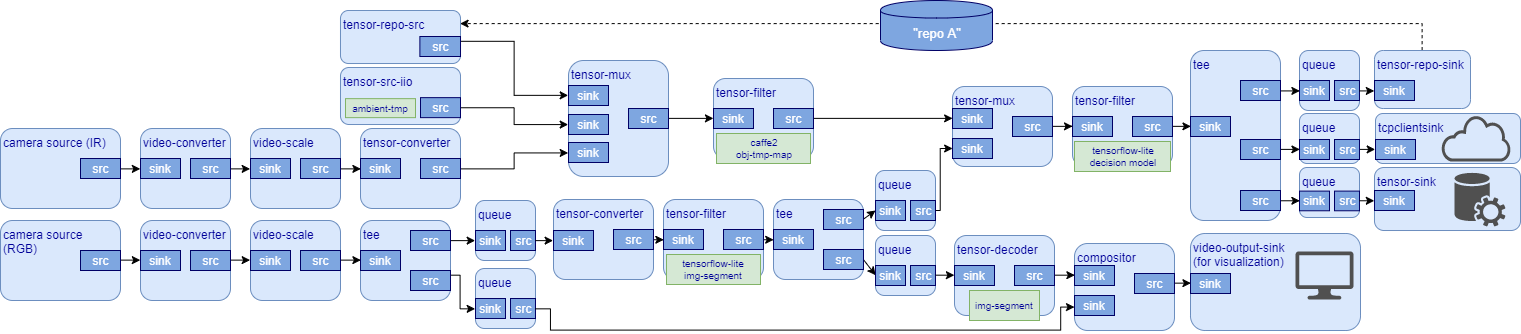

NNStreamer, an incubating LF AI Foundation project, is an efficient and flexible stream pipeline framework for complex neural network applications. It was developed and open-sourced by Samsung.

NNStreamer provides a set of GStreamer plugins so that developers can easily apply neural networks, attach related frameworks (including ROS, IIO, FlatBuffers, and Protocol Buffers), and manipulate tensor data streams in GStreamer pipelines, and then execute such pipelines efficiently. It has already been adopted by various Android and Tizen devices at Samsung, which suggests that it is reliable and robust enough for commercial products.

It supports well-known neural network frameworks including TensorFlow, TensorFlow Lite, Caffe2, PyTorch, OpenVINO, ARMNN, and NEURUN. In addition to these frameworks, users may use custom C functions, C++ objects, or Python objects as neural network filters of a pipeline at run time. Users may also add and integrate support for other frameworks or hardware AI accelerators at run time; such support may exist as independent plugin binaries.

NNStreamer's official binary releases support Tizen, Ubuntu, Android, macOS, and Yocto/OpenEmbedded; however, any target system that supports GStreamer should be compatible with NNStreamer as well. We provide APIs in C, Java, and .NET in case the GStreamer APIs are overkill. The NNStreamer APIs are also the standard Machine Learning APIs of Tizen and various Samsung products.

(( designers may need to retouch the diagram. ))

Open Source

NNStreamer was open-sourced on GitHub in 2018. It has been actively developed since then and has a few subprojects. NNStreamer joined the LF AI Foundation in April 2020.

We invite you to visit GitHub, where NNStreamer and its subprojects are developed. Please join our community as a user and a contributor. Your contributions are always welcome!

Get Started

On Ubuntu (16.04/18.04)

- sudo add-apt-repository ppa:nnstreamer/ppa

- sudo apt-get update

- sudo apt-get install nnstreamer nnstreamer-caffe2 nnstreamer-tensorflow nnstreamer-tensorflow-lite

Now you are ready to use NNStreamer as GStreamer plugins!
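As a rough illustration of what using the plugins can look like, the sketch below composes a small gst-launch-1.0 pipeline that converts a test video stream into tensors and passes them through a TensorFlow Lite model. The model path (./model.tflite), the 224x224 RGB input size, and the helper name build_pipeline are assumptions made for this example and are not part of NNStreamer; the model you use must accept that input shape, otherwise the pipeline will fail to negotiate. The script only prints the command unless gst-launch-1.0, the tensor_filter element, and the model file are all present.

```shell
#!/bin/sh
# Sketch only: MODEL is a hypothetical path; point it at a real TensorFlow Lite model.
MODEL="${MODEL:-./model.tflite}"

# build_pipeline (hypothetical helper) prints a pipeline description:
#   test video -> 224x224 RGB frames -> tensor stream -> TensorFlow Lite model -> sink
build_pipeline() {
    printf '%s' "videotestsrc num-buffers=10 ! videoconvert ! videoscale ! video/x-raw,format=RGB,width=224,height=224 ! tensor_converter ! tensor_filter framework=tensorflow-lite model=$1 ! tensor_sink"
}

PIPELINE="$(build_pipeline "$MODEL")"
echo "gst-launch-1.0 $PIPELINE"

# Run the pipeline only when GStreamer, the NNStreamer plugin, and the model exist.
if command -v gst-launch-1.0 >/dev/null 2>&1 \
    && gst-inspect-1.0 tensor_filter >/dev/null 2>&1 \
    && [ -f "$MODEL" ]; then
    # Word splitting of $PIPELINE is intentional: each token becomes one argument.
    gst-launch-1.0 $PIPELINE
fi
```

To try it with your own model, run the script as MODEL=/path/to/your-model.tflite sh ./try-nnstreamer.sh (the script file name is also just an example).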

...

- Add a link to a more detailed description.

- <<<NOTE>>> It would be better if we could provide this along with https://github.com/nnstreamer/nnstreamer/issues/2271 or a gst-launch example.

On Tizen

...

Tizen 5.5 or higher: Use the Machine-Learning Inference APIs (Native / .NET) to use NNStreamer in Tizen applications.

Android

Use the JCenter repository to use NNStreamer in Android Studio.

Yocto

OpenEmbedded's meta-neural-network layer includes NNStreamer.

macOS

macOS users may install NNStreamer via Homebrew taps or build NNStreamer for their own systems.

Other

In general, you may build NNStreamer on any GStreamer-compatible system.

Usage Examples

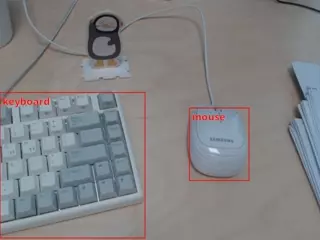

The applications shown in these screenshots require only a few lines of code (click a screenshot to view the code) and run efficiently on inexpensive embedded devices. With NNStreamer, they can even be implemented as single-line bash shell scripts.

Example applications are available on GitHub in nnstreamer-example.git and on the Wiki page.

Join the Conversation

NNStreamer maintains three mailing lists. You are invited to join the one that best matches your interests.

NNStreamer-Announce: Top-level milestone messages and announcements

NNStreamer-Technical-Discuss: Technical discussions

NNStreamer-TSC: Technical governance discussions

On Android

- @TODO@ Add how to use nnstreamer JCenter/JFrog repo.

On Yocto/OpenEmbedded

- @TODO@ Add how to use meta-neural-network.

On macOS

- @TODO@ Add how to use with Homebrew.

Build for your own system and frameworks ==> Just add a link.