Current state: ["Under DiscussionApproved"]

ISSUE: #17599

PRs:

Keywords: search, range search, radius, low bound, high boundrange filter

Released:

Summary(required)

At presentBy now, the behavior of of "search" in Milvus is to return returning TOPK most similar sorted results for each queried vector be searched.

This MEP is about how to realize a functionality named "range search" functionality. User specifies a range scope -- including radius low bound and radius high boundand range filter (optional), Milvus does "range search" to get TOPK , and also returns TOPK sorted results with distance falling in this range scope.

- both radius low bound and radius high bound param "radius" MUST be set, param "range filter" is optionalrange search will return TOPK results with

- for L2: low_bound <= distance < high_bound for IP: low_bound < distance <= high_bound

- falling in range scope means

| Metric type | Behavior |

|---|---|

| IP | radius < distance <= range_filter |

| L2 and other metric types | range_filter <= distance < radius |

Motivation(required)

Many users request Milvus to realize a the "range search" functionality. With this capability, user can

- get results with distance falling in a range scope

- get arbitrary TOPK search results more than 16384

...

We reuse the SDK interface Search() to do "range search". Add Only add 2 more parameters "radius_low_bound" and "radiusrange_high_boundfilter" into params.

When When param "radius_low_bound" and "radius_high_bound" are " is specified, Milvus does range search; otherwise, Milvus does search.

As shown in the following figure, set "radiusrange_low_boundfilter": 1.0, "radius_high_bound": 2.0 in search_ params.params.

| Code Block | ||

|---|---|---|

| ||

default_index = {"index_type": "HNSW", "params":{"M": 48, "efConstruction": 500}, "metric_type": "L2"}

collection.create_index("float_vector", default_index)

collection.load()

search_params = {"metric_type": "L2", "params": {"ef": 32, "radiusrange_low_boundfilter": 1.0, "radius_high_bound": 2.0}}

res = collection.search(vectors[:nq], "float_vector", search_params, limit, "int64 >= 0") |

- Add 3 new interfaces in knowhere

| Code Block | ||

|---|---|---|

| ||

// range search parameter legacy check virtual bool CheckRangeSearch(Config& cfg, const IndexType type, const IndexMode mode); // range search virtual DatasetPtr QueryByRange(const DatasetPtr& dataset, const Config& config, const faiss::BitsetView bitset); // brute force range search static DatasetPtr BruteForce::RangeSearch(const DatasetPtr base_dataset, const DatasetPtr query_dataset, const Config& config, const faiss::BitsetView bitset); |

Design Details(required)

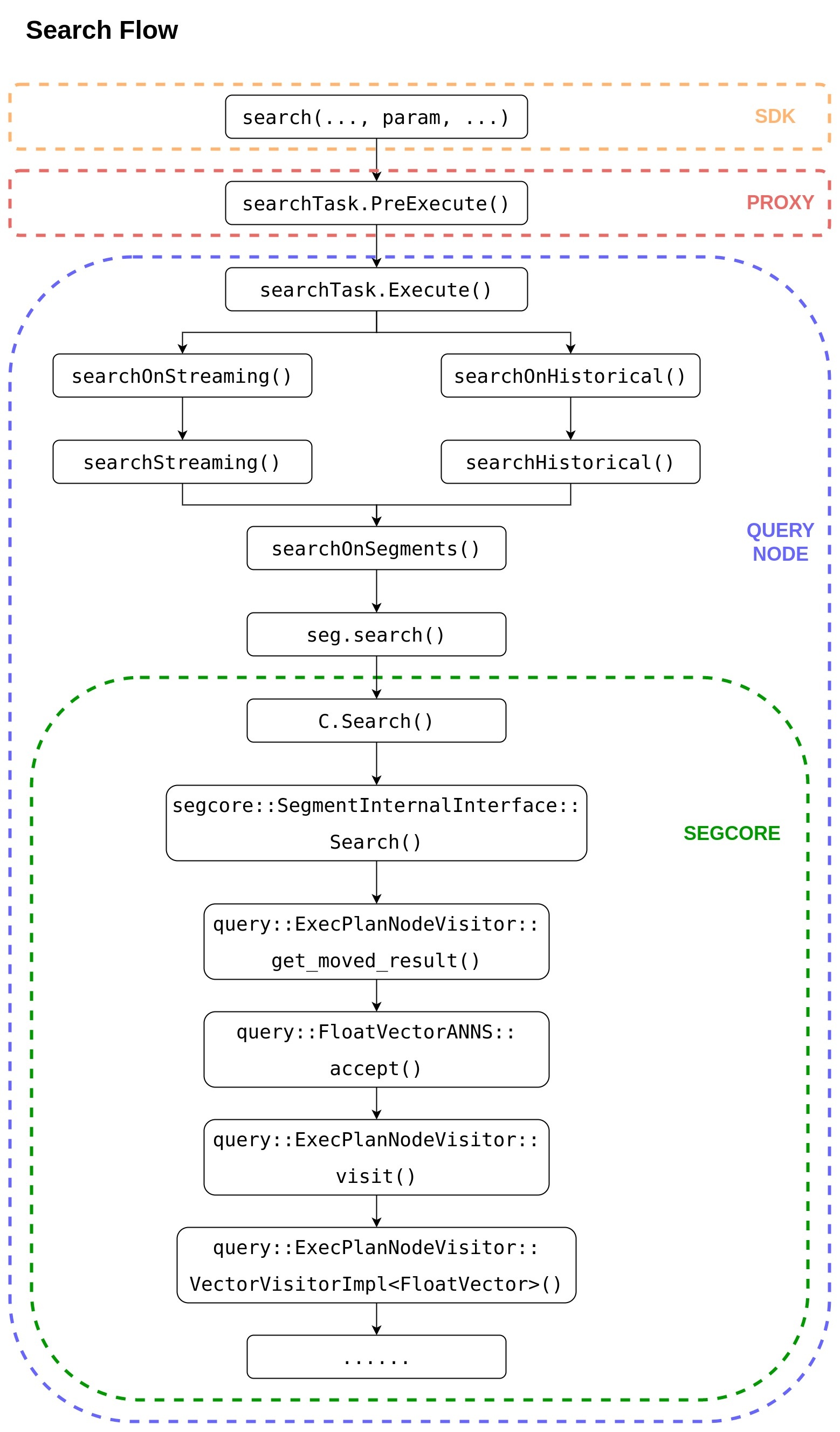

Range search completely reuses the call stack from SDK to segcore, then to knowhere.

Range search only needs to put the parameter "radius_low_bound" and "radius_high_bound" into the search parameter "param" from the SDK.

...

Knowhere

Index types and metric types supporting range search are listed below:

| IP | L2 | HAMMING | JACCARD | TANIMOTO | SUBSTRUCTURE | SUPERSTRUCTURE | |

|---|---|---|---|---|---|---|---|

| BIN_IDMAP | |||||||

| BIN_IVF_FLAT | |||||||

| IDMAP | |||||||

| IVF_FLAT | |||||||

| IVF_PQ | |||||||

| IVF_SQ8 | |||||||

| HNSW | |||||||

| ANNOY | |||||||

| DISKANN |

If call range search API with unsupported index types or unsupported metric types, exception will be thrown out.

In Knowhere, two new parameters "radius" and "range_filter" are passed in via config, and range search will return all un-sorted results with distance falling in this range scope.

| Metric type | Behavior |

|---|---|

| IP | radius < distance <= range_filter |

| L2 or other metric types | range_filter <= distance < radius |

Knowhere run range search in 2 steps:

- pass param "radius" to thirdparty to call their range search APIs, and get result

- if param "range_filter " is set, filter above result and return; if not, return above result directly

We add 3 new APIs CheckRangeSearch(), QueryByRange() and BruteForce::RangeSearch() to support range search.

- CheckRangeSearch()

This API is used to do range search parameter legacy check. It will throw exception when parameter legacy check fail.

The valid scope for "radius" is defined as: -1.0 <= radius <= float_max

| metric type | range | similar | not similar |

|---|---|---|---|

| L2 | [0, inf) | 0 | inf |

| IP | [-1, 1] | 1 | -1 |

| jaccard | [0, 1] | 0 | 1 |

| tanimoto | [0, inf) | 0 | inf |

| hamming | [0, n] | 0 | n |

2. QueryByRange()

This API returns all unsorted results with distance falling in the specified range scope.

| PROTO | virtual DatasetPtr |

INPUT | Dataset { Config { knowhere::meta::RADIUS: - knowhere::meta::RANGE_FILTER: - } |

OUTPUT | Dataset { |

LIMS is with length "nq+1", it's the offset prefix sum for result IDS and result DISTANSE. The length of IDS and DISTANCE are the same but variable.

Suppose N queried vectors are with label: {0, 1, 2, ..., n-1}

The result counts for each queried vectors are: {r(0), r(1), r(2), ..., r(n-1)}

Then the data in LIMS will be like this: {0, r(0), r(0)+r(1), r(0)+r(1)+r(2), ..., r(0)+r(1)+r(2)+...+r(n-1)}

The total range search result num is: LIMS[nq]

The range search result for each query vector is: IDS[lims[n], lims[n+1]) and DISTANCE[lims[n], lims[n+1])

The memory used for IDS, DISTANCE and LIMS are allocated in Knowhere, they will be auto-freed when Dataset deconstruction.

3. BruteForce::RangeSearch()

This API does range search for no-index dataset, it returns all unsorted results with distance "better than radius" (for IP: > radius; for others: < radius).

| PROTO | static DatasetPtr |

INPUT | Dataset { Dataset { Config { knowhere::meta::RADIUS: - knowhere::meta::RANGE_FILTER: - } |

OUTPUT | Dataset { |

The output is as same as QueryByRange().

Segcore

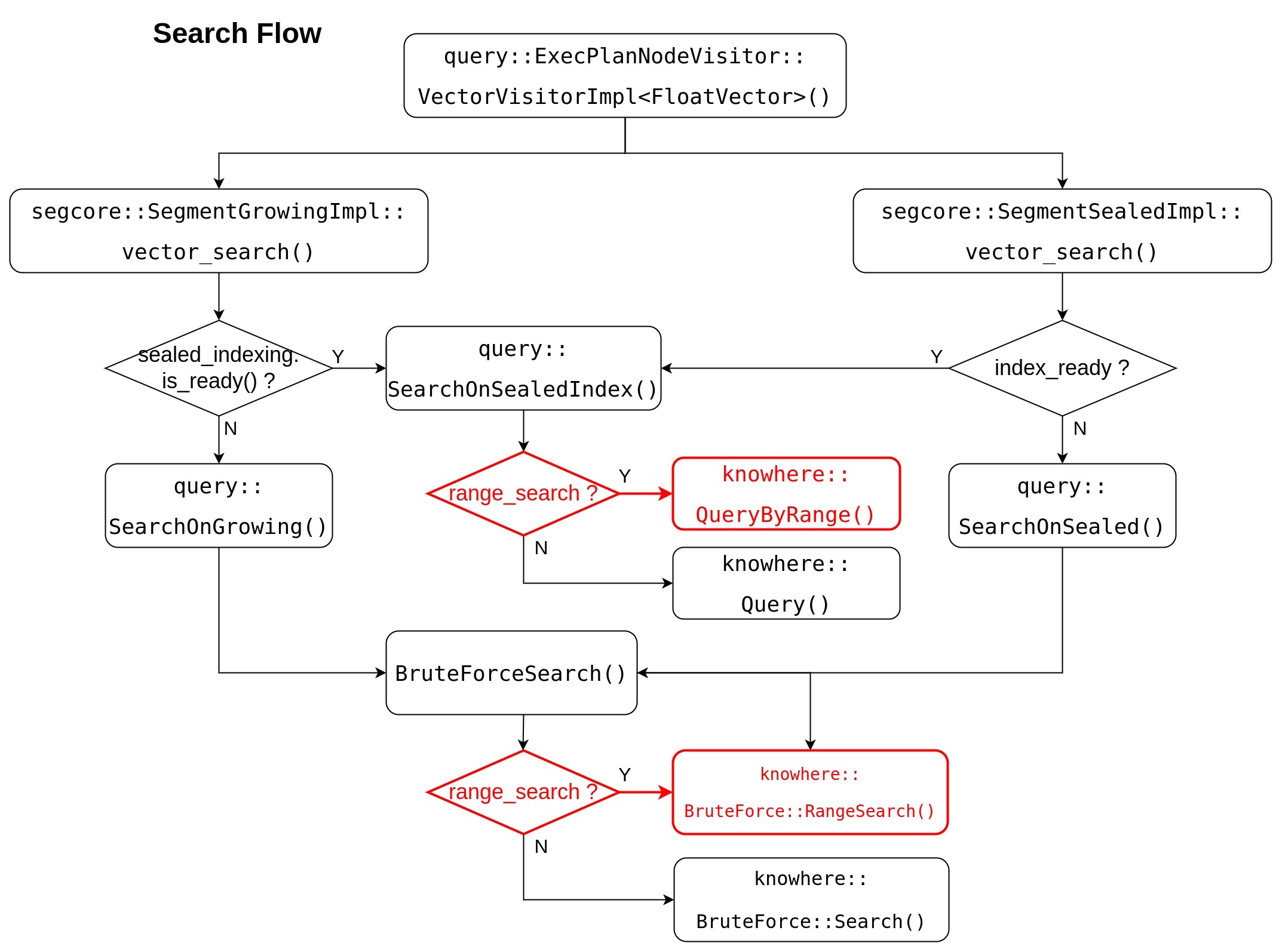

Segcore search flow will be updated as this flow chart, range search related change is marked RED.

Segcore uses radius parameter's existence to decide whether to run search, or to run range search.

- when param "radius

...

- " is set, range search is called;

- otherwise, search is called.

For API query::SearchOnSealedIndex() and BruteForceSearch(), they do like following:

- pass radius parameters (radius / range_filter) to knowhere

- get all unsorted range search result from knowhere

- for each NQ's results, do heap-sort

- return result Dataset with TOPK results

Whatever do range search or search, the output structure are same:

- query::SearchOnSealedIndex() => SearchResult

- BruteForceSearch() => SubSearchResult

Both SearchResult and SubSearchResult contain TOPK sorted result for each NQThe output of range search is exactly as same as the result of search.

- seg_offsets_: with length "nq * topk", -1 is filled in when no enough result data

- distances_: with lengh "nq * topk", data is undefined when no enough result data

Milvus

Range search completely reuses the call stack from SDK to segcore.

How to get more than 16384 range search results ?

With this solution, user can get maximum 16384 range search results in one call.

If user wants to get more than 16384 range search results, they can call range search multiple times with different range_filter parameter (use L2 as an example)

- NQ = 1

1st call with (range_filter = 0.0, radius = inf), get result distances like this:

{d(0), d(1), d(2), ..., d(n-1)}

2nd call with (range_filter = d(n-1), radius = inf), get result distances like this:

{d(n), d(n+1), d(n+2), ..., d(2n-1)}

3rd call with (range_filter = d(2n-1), radius = inf), get result distances like this:

{d(2n), d(2n+1), d(2n+2), ..., d(3n-1)}

...

- NQ > 1 (suppose NQ = 2)

1st call with (range_filter = 0.0, radius = inf), get result distances like this:

{d(0,0), d(0,1), d(0,2), ..., d(0,n-1), d(1,0), d(1,1), d(1,2), ..., d(1,n-1)}

2nd call with (range_filter = min{d(0,n-1), d(1,n-1)}, radius = inf), get result distances like this:

{d(0,n), d(0,n+1), d(0,n+2), ..., d(0,2n-1), d(1,n), d(1,n+1), d(1,n+2), ..., d(1,2n-1)}

3rd call with (range_filter = min{d(0,2n-1), d(1,2n-1)}, radius = inf), get result distances like this:

{d(0,2n), d(0,2n+1), d(0,2n+2), ..., d(0,3n-1), d(1,2n), d(1,2n+1), d(1,2n+2), ..., d(1,3n-1)}

...

The result of each iteration will have some duplication with the result of previous iteration, user need do duplication check and remove them.

Compatibility, Deprecation, and Migration Plan(optional)

There is no interface change and This is a new functionality, there is no compatibility issue.

Test Plan(required)

Knowhere

- Add new unittest

- Add benchmark to test range search runtime and recall

- Add benchmark to test range search QPS

There is no public dataset for range search. I have created range search data set based on sift1M and glove200.

You can find them in NAS:

- test/milvus/ann_hdf5/binary/sift-4096-hamming-range.hdf5

- base dataset and query dataset are identical with sift1m

- radius = 291.0

- result length for each nq is different

- total result num 1,063,078

- test/milvus/ann_hdf5/sift-128-euclidean-range.hdf5

- base dataset and query dataset are identical with sift1m

- radius = 186.0 * 186.0

- result length for each nq is different

- total result num 1,054,377

- test/milvus/ann_hdf5/sift-128-euclidean-range-multi.hdf5

- base dataset and query dataset are identical with sift1m

- ground truth IDs and Distances are identical with sift1m

- each nq's radius is set to the last ground truth distance

- result length for each nq is 100

- total result num 1,000,000

- test/milvus/ann_hdf5/glove-200-angular-range.hdf5

- base dataset and query dataset are identical with glove200

- radius = 0.52

- result length for each nq is different

- total result num 1,060,888

Segcore

- Add new range search unittest

Milvus

- use sift1M/glove200 dataset to test range search (radius = max_float / -1.0)

- reuse search testcases to test range search

- use sift1M dataset to test range search, we expect to get identical result results as search

Rejected Alternatives(optional)

...

The project implementation of the previous proposal will be much more complicated than is too complicated to achieve comparing with current proposal.

Cons:

Adv.

- Get all range search result in one call

Cons:

- Need

- Knowhere will add new API QueryByRange() and parameter legacy check API CheckRangeSearch()

- Because the output format of range search is not unified with search, we need add new structure SubRangeSearchResult for range search

- Because range search returns all result (not topk), we need implement Merge operation for chunk, segment, query node and proxy

- Every Merge operation will make the result size more bigger, system memory usage cannot be estimated, the GRPC bandwidth between server and client cannot be estimated

- TOPK is a required parameter in SDK, but not used by range search, it will make user confused

- TOPK is also a required parameter in many search related APIs, in this proposal, we have to change all these APIs to support without TOPK

- Memory explosion caused by Merge

- Many API modification caused by invalid topk parameter

Others

Because the result length of range search from knowhere is variable, knowhere plan to afford another API to return the range search result count for each NQ.

If there is user request to get all range search result in one call, query node team will afford another solution to save range search output of knowhere to S3 directlyCurrent proposal is better than the previous one in all respects.

References(optional)

...

...

Segcore Search Flow

...