Current state: ["Approved"]

ISSUE: #17599

PRs:

Keywords: search, range search, radius, range filter

Released:

Summary(required)

Currently, "search" in Milvus returns the TOPK most similar results, sorted, for each query vector.

This MEP implements another query function. The user specifies a query "radius", and Milvus returns all results whose distances are better than this "radius" ("< radius" for "L2"; "> radius" for "IP").

This function can be considered a "superset" of the existing query function: if you sort the range search results and take the first `topk` of them, they are identical to the result of the existing query function, provided that at least `topk` results fall within the radius.

The result output of this MEP differs from the original query result. The original query result has a fixed length of `nq * topk`, while the range search result has a variable length. In addition to `IDs` / `distances`, `lims` is also returned to record the offset of each query vector's results in the result set. Another MEP, pagination, will uniformly process the results of `Query` and `QueryByRange` and return them to the client, so processing of the returned results is outside the scope of this MEP.


This MEP introduces a functionality named "range search". The user specifies a range scope consisting of "radius" and an optional "range_filter"; Milvus performs a range search and returns the TOPK sorted results whose distances fall within this range scope.

- param "radius" MUST be set; param "range_filter" is optional

- "falling in the range scope" means:

| Metric type | Behavior |
|---|---|
| IP | radius < distance <= range_filter |
| L2 and other metric types | range_filter <= distance < radius |
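The following is a minimal Python sketch of the "falls in the range scope" rule from the table above. The function name `in_range` and its signature are hypothetical and only illustrate the rule. As the table states, every non-IP metric is handled like L2.

```python
from typing import Optional


def in_range(distance: float, metric_type: str, radius: float,
             range_filter: Optional[float] = None) -> bool:
    """Return True if `distance` falls in the range scope.

    IP:            radius < distance <= range_filter
    L2 and others: range_filter <= distance < radius
    range_filter is optional; when it is None only the radius bound applies.
    """
    if metric_type == "IP":
        # Larger IP means more similar, so radius is the exclusive lower bound.
        if distance <= radius:
            return False
        return range_filter is None or distance <= range_filter
    # Smaller distance means more similar, so radius is the exclusive upper bound.
    if distance >= radius:
        return False
    return range_filter is None or distance >= range_filter
```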

Motivation(required)

Many users have requested the "range search" functionality. With this capability, users can:

- get results whose distances fall within a range scope

- get more than 16384 search results

Public Interfaces(optional)

- No interface change in Milvus or any SDK

We reuse the SDK interface `Search()` to implement range search, so the interfaces of Milvus and all SDKs do not need to change. We only need to add 2 more parameters, "radius" and "range_filter", into params.

When param "radius" is specified, Milvus does range search; otherwise, Milvus does normal search.

As shown in the following example, set "range_filter": 1.0 and "radius": 2.0 in search_params["params"].

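```python
# Assumes `collection` is a pymilvus Collection with a "float_vector" field and an
# "int64" scalar field, and that `vectors`, `nq` and `limit` are defined by the caller.
default_index = {"index_type": "HNSW", "params": {"M": 48, "efConstruction": 500}, "metric_type": "L2"}
collection.create_index("float_vector", default_index)
collection.load()

# "radius" triggers range search; for L2 it keeps results with range_filter <= distance < radius
search_params = {"metric_type": "L2", "params": {"ef": 32, "range_filter": 1.0, "radius": 2.0}}
res = collection.search(vectors[:nq], "float_vector", search_params, limit, "int64 >= 0")
```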

- Need to add 3 new interfaces in knowhere

```cpp
// range search parameter validity check
virtual bool
CheckRangeSearch(Config& cfg, const IndexType type, const IndexMode mode);

// range search
virtual DatasetPtr
QueryByRange(const DatasetPtr& dataset, const Config& config, const faiss::BitsetView bitset);
```

One alternative proposal is to reuse the interface Query() for range search instead of adding a new interface QueryByRange(). We do not accept this proposal because, given the implementation in Knowhere, not all index types support range search.

Currently, Knowhere supports 13 index types:

BinaryIDMAP, BinaryIVF

Design Details(required)

Describe the new thing you want to do in appropriate detail. This may be fairly extensive and have large subsections of its own. Or it may be a few sentences. Use judgment based on the scope of the change.

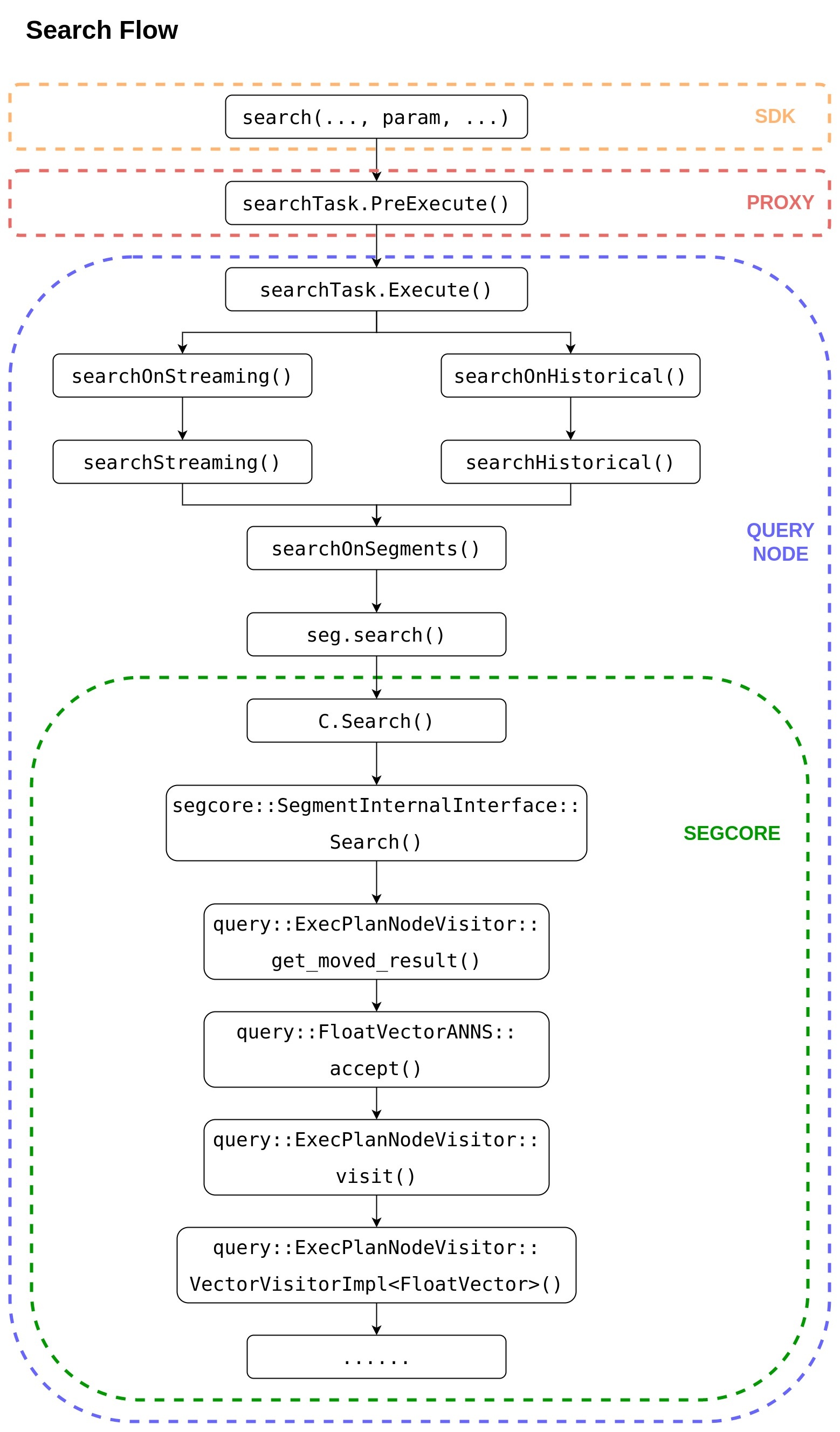

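The figure shows the complete call stack of a Search request from the SDK to SEGCORE. Range search fully reuses this call stack without any change; it only requires the SDK to put the parameter "radius" into the search parameter "param".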

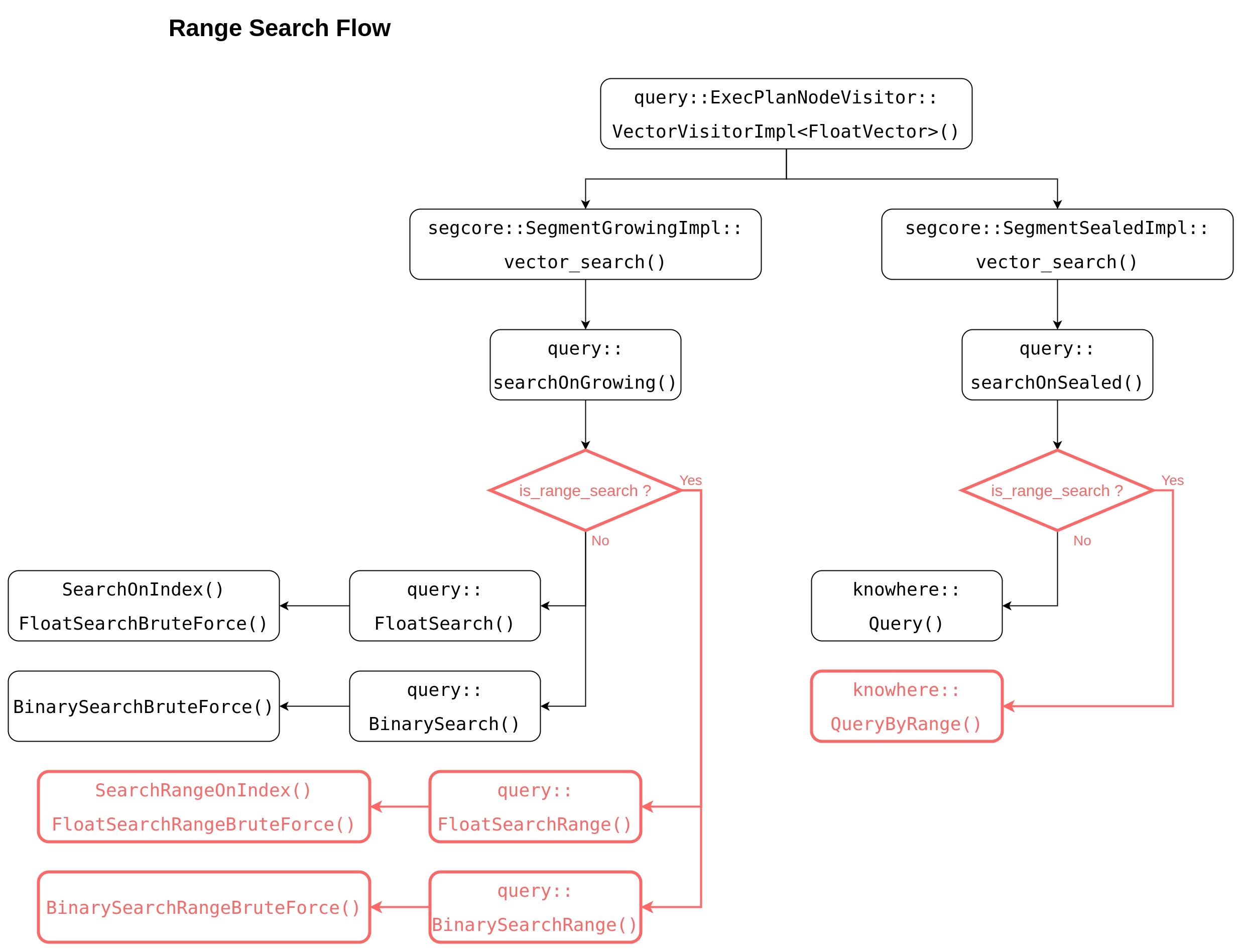

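The figure shows the call stack of vector search inside SEGCORE; the parts in black are the functionality that is already implemented.

For sealed segments, implementing range search requires knowhere to provide the QueryByRange functionality.

For growing segments, no index is built, so the brute-force search implemented by the knowhere IDMAP index cannot be used, and the complete brute-force search logic has been implemented separately. To support range search, the functions shown in red must additionally be implemented.

An alternative solution is for knowhere to provide a new kind of IDMAP index that does not require inserting vector data, but only needs the external memory address of the vector data. A growing segment could create such an index temporarily at query time, call the Query & QueryByRange interfaces provided by IDMAP, and destroy the index immediately after use. This solution has no extra memory overhead, but its feasibility needs further investigation.

Processing of query results

Query returns 2 kinds of query results. One is SubSearchResult, which stores the query result of each chunk in a segment.

Member variables "is_range_search_" and "lims_", and member functions "merge_range()" and "merge_range_impl()", need to be added.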

```cpp
class SubSearchResult {
    // ... ...
    static SubSearchResult
    merge(const SubSearchResult& left, const SubSearchResult& right);

    void
    merge(const SubSearchResult& sub_result);

    static SubSearchResult
    merge_range(const SubSearchResult& left, const SubSearchResult& right);  // <==== newly added

    void
    merge_range(const SubSearchResult& sub_result);  // <==== newly added

 private:
    template <bool is_desc>
    void
    merge_impl(const SubSearchResult& sub_result);

    void
    merge_range_impl(const SubSearchResult& sub_result);  // <==== newly added

 private:
    bool is_range_search_;  // <==== newly added
    int64_t num_queries_;
    int64_t topk_;
    int64_t round_decimal_;
    MetricType metric_type_;
    std::vector<int64_t> seg_offsets_;
    std::vector<float> distances_;
    std::vector<size_t> lims_;  // <==== newly added
};
```
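The following minimal Python model (not the segcore C++ code) illustrates what merge_range() has to do with `lims_`. It merges two range results that cover the same nq queries, query by query. Each result is a hypothetical `(seg_offsets, distances, lims)` tuple. The sketch makes two assumptions: the results are unsorted, as knowhere returns them, so per-query merging is plain concatenation; and the offsets are already segment-level.

```python
from typing import List, Sequence, Tuple

RangeResult = Tuple[Sequence[int], Sequence[float], Sequence[int]]


def merge_range(left: RangeResult, right: RangeResult) -> Tuple[List[int], List[float], List[int]]:
    """Merge two (seg_offsets, distances, lims) range results sharing the same nq."""
    l_off, l_dis, l_lims = left
    r_off, r_dis, r_lims = right
    if len(l_lims) != len(r_lims):
        raise ValueError("both results must cover the same nq")
    offsets: List[int] = []
    dists: List[float] = []
    lims: List[int] = [0]
    for i in range(len(l_lims) - 1):
        # Results of query i from the left part, then from the right part.
        offsets.extend(l_off[l_lims[i]:l_lims[i + 1]])
        offsets.extend(r_off[r_lims[i]:r_lims[i + 1]])
        dists.extend(l_dis[l_lims[i]:l_lims[i + 1]])
        dists.extend(r_dis[r_lims[i]:r_lims[i + 1]])
        lims.append(len(offsets))  # new prefix sum after query i
    return offsets, dists, lims
```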

The other is SearchResult, which stores the query result of the segment.

Member variables "is_range_search_" and "lims_" need to be added.

```cpp
struct SearchResult {
    // ... ...
 public:
    int64_t total_nq_;
    int64_t unity_topK_;
    void* segment_;

    // first fill data during search, and then update data after reducing search results
    bool is_range_search_;  // <==== newly added
    std::vector<float> distances_;
    std::vector<int64_t> seg_offsets_;
    std::vector<size_t> lims_;  // <==== newly added
    // ... ...
};
```

In the query node, reduce_range() across multiple segments also needs to be implemented.

In the proxy, reduce_range() across multiple SearchResults also needs to be implemented.

...

```cpp
// brute force range search
static DatasetPtr
BruteForce::RangeSearch(const DatasetPtr base_dataset, const DatasetPtr query_dataset, const Config& config,
                        const faiss::BitsetView bitset);
```

Design Details(required)

Knowhere

Index types and metric types supporting range search are listed below:

| Index type | IP | L2 | HAMMING | JACCARD | TANIMOTO | SUBSTRUCTURE | SUPERSTRUCTURE |
|---|---|---|---|---|---|---|---|
| BIN_IDMAP | | | | | | | |
| BIN_IVF_FLAT | | | | | | | |
| IDMAP | | | | | | | |
| IVF_FLAT | | | | | | | |
| IVF_PQ | | | | | | | |
| IVF_SQ8 | | | | | | | |
| HNSW | | | | | | | |
| ANNOY | | | | | | | |
| DISKANN | | | | | | | |

If the range search API is called with an unsupported index type or metric type, an exception is thrown.

In Knowhere, two new parameters, "radius" and "range_filter", are passed in via config, and range search returns all unsorted results whose distances fall within this range scope.

| Metric type | Behavior |
|---|---|
| IP | radius < distance <= range_filter |
| L2 or other metric types | range_filter <= distance < radius |

Knowhere runs range search in 2 steps:

- pass param "radius" to the third-party library's range search API and get the result

- if param "range_filter" is set, filter the above result and return it; otherwise, return the above result directly
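The two steps can be sketched in Python as follows. This is a simplified model. The list `distances` stands in for distances the third-party library would compute, and the step-1 filter stands in for the library's own radius-only range search. `range_search_two_step` is a hypothetical name, not a Knowhere API.

```python
from typing import List, Optional, Sequence, Tuple


def range_search_two_step(distances: Sequence[float], metric_type: str, radius: float,
                          range_filter: Optional[float] = None) -> List[Tuple[int, float]]:
    """Return unsorted (id, distance) pairs that fall in the range scope."""
    # Step 1: stand-in for the third-party range search, which only knows "radius".
    if metric_type == "IP":
        hits = [(i, d) for i, d in enumerate(distances) if d > radius]
    else:
        hits = [(i, d) for i, d in enumerate(distances) if d < radius]
    # Step 2: apply "range_filter" only if it is set; the order of hits is kept.
    if range_filter is None:
        return hits
    if metric_type == "IP":
        return [(i, d) for i, d in hits if d <= range_filter]
    return [(i, d) for i, d in hits if d >= range_filter]
```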

We add 3 new APIs, CheckRangeSearch(), QueryByRange() and BruteForce::RangeSearch(), to support range search.

1. CheckRangeSearch()

This API checks the validity of the range search parameters. It throws an exception when the parameter check fails.

The valid scope for "radius" is defined as: -1.0 <= radius <= float_max

| Metric type | Distance range | Most similar | Least similar |
|---|---|---|---|
| L2 | [0, inf) | 0 | inf |
| IP | [-1, 1] | 1 | -1 |
| JACCARD | [0, 1] | 0 | 1 |
| TANIMOTO | [0, inf) | 0 | inf |
| HAMMING | [0, n] | 0 | n |
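The following hypothetical Python sketch shows the kind of check CheckRangeSearch() performs. The radius bounds come from the valid scope stated above. The ordering check between range_filter and radius is an assumption: it is derived from the range-scope definitions, since otherwise the scope would be empty, and it is not a documented Knowhere rule.

```python
from typing import Optional

FLOAT_MAX = 3.4028234663852886e38  # largest finite float32 value


def check_range_search(metric_type: str, radius: float,
                       range_filter: Optional[float] = None) -> None:
    """Hypothetical sketch: raise ValueError if range search parameters are invalid."""
    if not (-1.0 <= radius <= FLOAT_MAX):
        raise ValueError(f"radius {radius} is out of the valid scope [-1.0, float_max]")
    if range_filter is None:
        return
    # Assumption: range_filter must lie on the "more similar" side of radius.
    if metric_type == "IP" and not range_filter > radius:
        raise ValueError("for IP, range_filter must be greater than radius")
    if metric_type != "IP" and not range_filter < radius:
        raise ValueError("for L2 and other metrics, range_filter must be less than radius")
```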

2. QueryByRange()

This API returns all unsorted results with distance falling in the specified range scope.

| Field | Value |
|---|---|
| PROTO | virtual DatasetPtr |
| INPUT | Dataset { ... }, Config { knowhere::meta::RADIUS: -, knowhere::meta::RANGE_FILTER: - } |
| OUTPUT | Dataset { ... } |

LIMS has length "nq+1" and holds the offset prefix sums for result IDS and result DISTANCE. IDS and DISTANCE have the same length, which is variable.

Suppose the n (= nq) query vectors are labeled {0, 1, 2, ..., n-1}, and the result counts for these query vectors are {r(0), r(1), r(2), ..., r(n-1)}.

Then LIMS contains: {0, r(0), r(0)+r(1), r(0)+r(1)+r(2), ..., r(0)+r(1)+r(2)+...+r(n-1)}

The total number of range search results is LIMS[nq].

The range search result for query vector i is IDS[LIMS[i], LIMS[i+1]) and DISTANCE[LIMS[i], LIMS[i+1]).

The memory for IDS, DISTANCE and LIMS is allocated in Knowhere and is freed automatically when the Dataset is destructed.
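The LIMS layout can be sketched in Python with `itertools.accumulate`. `build_lims` and `results_of` are hypothetical helpers that mirror the formulas above; they are not Knowhere functions.

```python
from itertools import accumulate
from typing import List, Sequence, Tuple


def build_lims(counts: Sequence[int]) -> List[int]:
    """LIMS = {0, r(0), r(0)+r(1), ..., r(0)+...+r(n-1)}, length nq+1."""
    return [0] + list(accumulate(counts))


def results_of(i: int, ids: Sequence[int], distances: Sequence[float],
               lims: Sequence[int]) -> Tuple[List[int], List[float]]:
    """Results of query vector i: IDS[LIMS[i], LIMS[i+1]) and DISTANCE[LIMS[i], LIMS[i+1])."""
    return list(ids[lims[i]:lims[i + 1]]), list(distances[lims[i]:lims[i + 1]])
```

For example, per-query counts {2, 0, 3} give LIMS = {0, 2, 2, 5}, so LIMS[nq] = 5 results in total, and query 1 has an empty slice.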

3. BruteForce::RangeSearch()

This API performs range search on a dataset without an index. It returns all unsorted results whose distances are "better than radius" (for IP: > radius; for others: < radius).

| Field | Value |
|---|---|
| PROTO | static DatasetPtr |
| INPUT | Dataset { ... } (base), Dataset { ... } (query), Config { knowhere::meta::RADIUS: -, knowhere::meta::RANGE_FILTER: - } |
| OUTPUT | Dataset { ... } |

The output is the same as that of QueryByRange().
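BruteForce::RangeSearch() can be modeled in pure Python as follows. The sketch has several limitations and assumptions. It supports only L2 and IP and ignores the bitset. It treats L2 as squared Euclidean distance, which is consistent with the radius = 186.0 * 186.0 used for the sift test dataset. The function name is hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def brute_force_range_search(base: Sequence[Sequence[float]],
                             queries: Sequence[Sequence[float]],
                             metric_type: str, radius: float,
                             range_filter: Optional[float] = None
                             ) -> Tuple[List[int], List[float], List[int]]:
    """Return (ids, distances, lims) like QueryByRange(): unsorted, variable length."""
    ids: List[int] = []
    dists: List[float] = []
    lims: List[int] = [0]
    for q in queries:
        for j, b in enumerate(base):
            if metric_type == "IP":
                d = sum(x * y for x, y in zip(q, b))
                ok = d > radius and (range_filter is None or d <= range_filter)
            else:  # assumed squared L2 distance
                d = sum((x - y) ** 2 for x, y in zip(q, b))
                ok = d < radius and (range_filter is None or d >= range_filter)
            if ok:
                ids.append(j)
                dists.append(d)
        lims.append(len(ids))  # prefix sum of per-query result counts
    return ids, dists, lims
```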

Segcore

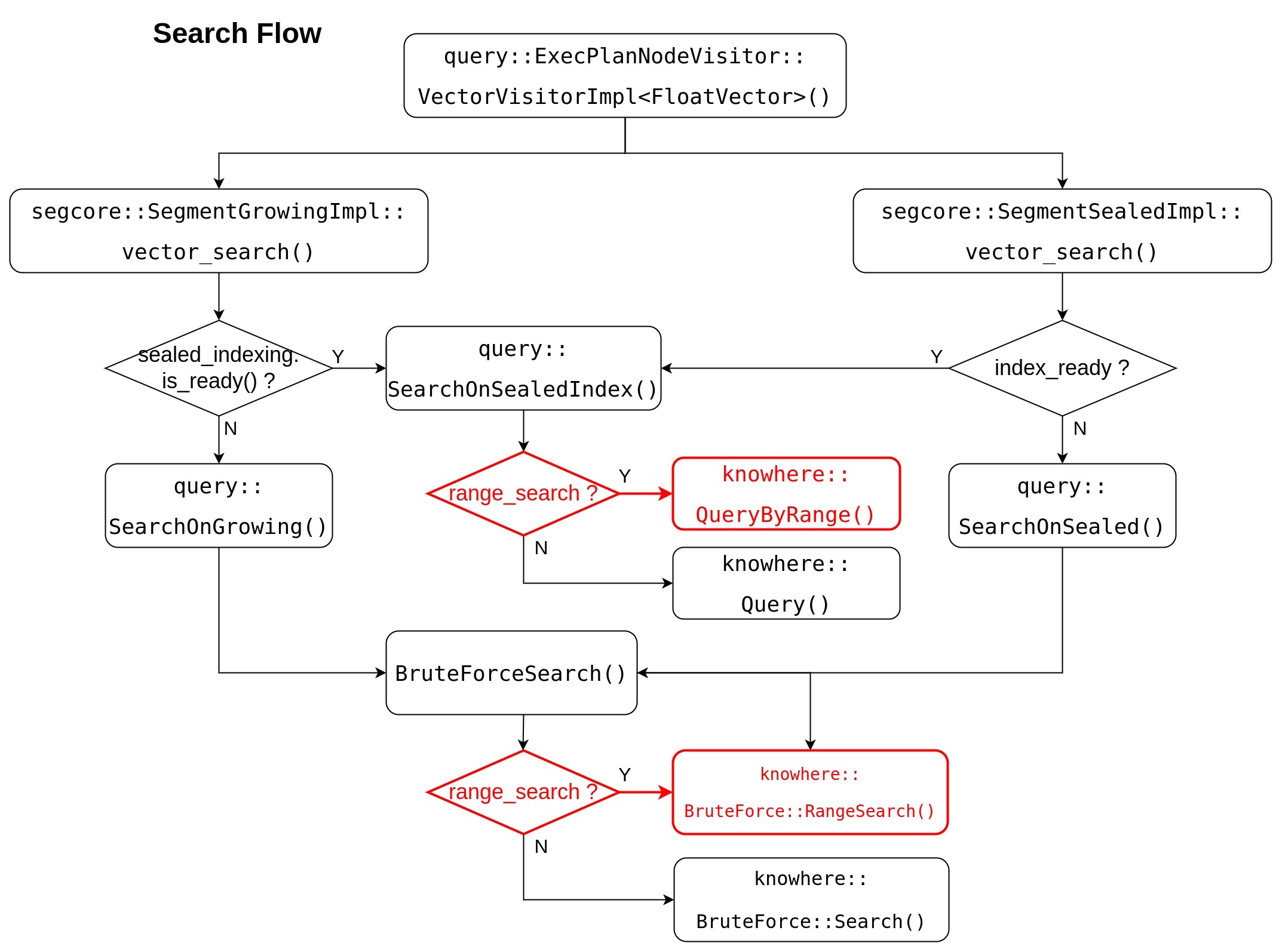

The Segcore search flow will be updated as shown in this flow chart; range search related changes are marked in RED.

Segcore uses the presence of the "radius" parameter to decide whether to run search or range search.

- when param "radius" is set, range search is called;

- otherwise, search is called.
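The following minimal Python sketch shows this dispatch rule. It uses the params layout from the SDK example in this MEP (`{"metric_type": ..., "params": {...}}`). `choose_search_path` is a hypothetical helper, not a segcore function.

```python
from typing import Any, Dict


def choose_search_path(search_params: Dict[str, Any]) -> str:
    """Return "range_search" if "radius" is present in params, otherwise "search"."""
    params = search_params.get("params", {})
    return "range_search" if "radius" in params else "search"
```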

The APIs query::SearchOnSealedIndex() and BruteForceSearch() work as follows:

- pass the range parameters (radius / range_filter) to knowhere

- get all unsorted range search results from knowhere

- heap-sort the results of each NQ

- return a result Dataset with TOPK results

Whether running range search or search, the output structures are the same:

- query::SearchOnSealedIndex() => SearchResult

- BruteForceSearch() => SubSearchResult

Both SearchResult and SubSearchResult contain TOPK sorted results for each NQ.

- seg_offsets_: length "nq * topk"; -1 is filled in when there are not enough results

- distances_: length "nq * topk"; values are undefined when there are not enough results
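The conversion from knowhere's unsorted, variable-length range search output to the fixed `nq * topk` layout can be sketched in Python as follows. `heapq.nsmallest` / `heapq.nlargest` stand in for segcore's heap-sort, and the function name is hypothetical. NaN is used here as an arbitrary placeholder for the "undefined" distances.

```python
import heapq
from typing import List, Sequence, Tuple


def topk_from_range_result(seg_offsets: Sequence[int], distances: Sequence[float],
                           lims: Sequence[int], topk: int,
                           metric_type: str) -> Tuple[List[int], List[float]]:
    """Convert unsorted range search output into sorted, fixed-size nq * topk arrays."""
    nq = len(lims) - 1
    out_offsets: List[int] = []
    out_dists: List[float] = []
    for i in range(nq):
        pairs = list(zip(distances[lims[i]:lims[i + 1]], seg_offsets[lims[i]:lims[i + 1]]))
        # IP: larger is better; L2 and other metrics: smaller is better.
        if metric_type == "IP":
            best = heapq.nlargest(topk, pairs)
        else:
            best = heapq.nsmallest(topk, pairs)
        out_offsets.extend(off for _, off in best)
        out_dists.extend(d for d, _ in best)
        pad = topk - len(best)
        out_offsets.extend([-1] * pad)          # -1 marks "no result"
        out_dists.extend([float("nan")] * pad)  # undefined in segcore; NaN in this sketch
    return out_offsets, out_dists
```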

Milvus

Range search completely reuses the call stack from SDK to segcore.

How to get more than 16384 range search results?

With this solution, a user can get at most 16384 range search results in one call.

To get more than 16384 range search results, the user can call range search multiple times with different range_filter values (using L2 as an example, with n = limit results returned per call):

- NQ = 1

1st call with (range_filter = 0.0, radius = inf) returns result distances:

{d(0), d(1), d(2), ..., d(n-1)}

2nd call with (range_filter = d(n-1), radius = inf) returns result distances:

{d(n), d(n+1), d(n+2), ..., d(2n-1)}

3rd call with (range_filter = d(2n-1), radius = inf) returns result distances:

{d(2n), d(2n+1), d(2n+2), ..., d(3n-1)}

...

- NQ > 1 (suppose NQ = 2)

1st call with (range_filter = 0.0, radius = inf) returns result distances:

{d(0,0), d(0,1), d(0,2), ..., d(0,n-1), d(1,0), d(1,1), d(1,2), ..., d(1,n-1)}

2nd call with (range_filter = min{d(0,n-1), d(1,n-1)}, radius = inf) returns result distances:

{d(0,n), d(0,n+1), d(0,n+2), ..., d(0,2n-1), d(1,n), d(1,n+1), d(1,n+2), ..., d(1,2n-1)}

3rd call with (range_filter = min{d(0,2n-1), d(1,2n-1)}, radius = inf) returns result distances:

{d(0,2n), d(0,2n+1), d(0,2n+2), ..., d(0,3n-1), d(1,2n), d(1,2n+1), d(1,2n+2), ..., d(1,3n-1)}

...

The results of each iteration may overlap with those of the previous iteration, so the user needs to check for and remove duplicates.
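The following minimal Python sketch shows the client-side loop for NQ = 1 with L2. The `search` callable is a hypothetical stand-in for a range search call with the given (range_filter, radius, limit), and it must return (id, distance) pairs sorted by ascending distance. Duplicates are removed by ID. The loop has one known limitation: it stops early if `topk` or more results share the boundary distance, which always happens when topk = 1.

```python
from typing import Callable, List, Set, Tuple

Result = Tuple[int, float]  # (id, distance)


def fetch_all_l2(search: Callable[[float, float, int], List[Result]],
                 radius: float, topk: int) -> List[Result]:
    """Page through range search results for one query vector (NQ = 1, L2)."""
    collected: List[Result] = []
    seen: Set[int] = set()
    range_filter = 0.0
    while True:
        page = search(range_filter, radius, topk)  # sorted by ascending distance
        new = [r for r in page if r[0] not in seen]  # drop duplicates of earlier pages
        if not new:
            break
        for rid, dist in new:
            seen.add(rid)
            collected.append((rid, dist))
        if len(page) < topk:
            break  # fewer than topk results: the range scope is exhausted
        range_filter = page[-1][1]  # next call starts from the last distance
    return collected
```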

Compatibility, Deprecation, and Migration Plan(optional)

...

This is a new functionality, so there is no compatibility issue.

Test Plan(required)

Describe in a few sentences how the MEP will be tested. We are mostly interested in system tests (since unit tests are specific to implementation details). How will we know that the implementation works as expected? How will we know nothing broke?

Benchmark datasets sift-128-euclidean-range.hdf5 and sift-4096-hamming-range.hdf5 have been generated for range search.


Knowhere

- Add new unit tests

- Add a benchmark to test range search runtime and recall

- Add a benchmark to test range search QPS

There is no public dataset for range search, so I have created range search datasets based on sift1M and glove200.

You can find them in NAS:

- test/milvus/ann_hdf5/binary/sift-4096-hamming-range.hdf5
  - base dataset and query dataset are identical to sift1m
  - radius = 291.0
  - result length for each nq is different
  - total result count 1,063,078

- test/milvus/ann_hdf5/sift-128-euclidean-range.hdf5
  - base dataset and query dataset are identical to sift1m
  - radius = 186.0 * 186.0
  - result length for each nq is different
  - total result count 1,054,377

- test/milvus/ann_hdf5/sift-128-euclidean-range-multi.hdf5
  - base dataset and query dataset are identical to sift1m
  - ground truth IDs and Distances are identical to sift1m
  - each nq's radius is set to the last ground truth distance
  - result length for each nq is 100
  - total result count 1,000,000

- test/milvus/ann_hdf5/glove-200-angular-range.hdf5
  - base dataset and query dataset are identical to glove200
  - radius = 0.52
  - result length for each nq is different
  - total result count 1,060,888

Segcore

- Add new range search unit tests

Milvus

- use the sift1M/glove200 datasets to test range search (radius = max_float for L2 / -1.0 for IP); we expect results identical to those of search

Rejected Alternatives(optional)

The previous proposal of this MEP was to let range search return all results with distances better than a "radius".

Implementing the previous proposal is considerably more complicated than implementing the current one.

Pros:

- Get all range search results in one call

Cons:

- Need to implement Merge operations for chunk, segment, query node and proxy

- Memory explosion caused by Merge

- Many API modifications caused by the topk parameter becoming invalid

Others

Because the length of knowhere's range search result is variable, knowhere plans to provide another API that returns the range search result count for each NQ.

If users request getting all range search results in one call, the query node team will provide another solution that saves knowhere's range search output to S3 directly.